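You may remember CPAL, the new AI academic conference announced in May of this year and led by two prominent Chinese AI researchers, Yi Ma and Harry Shum (Heung-Yeung Shum).

In case it has been a while and some readers have forgotten, here is a brief refresher on what CPAL is.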

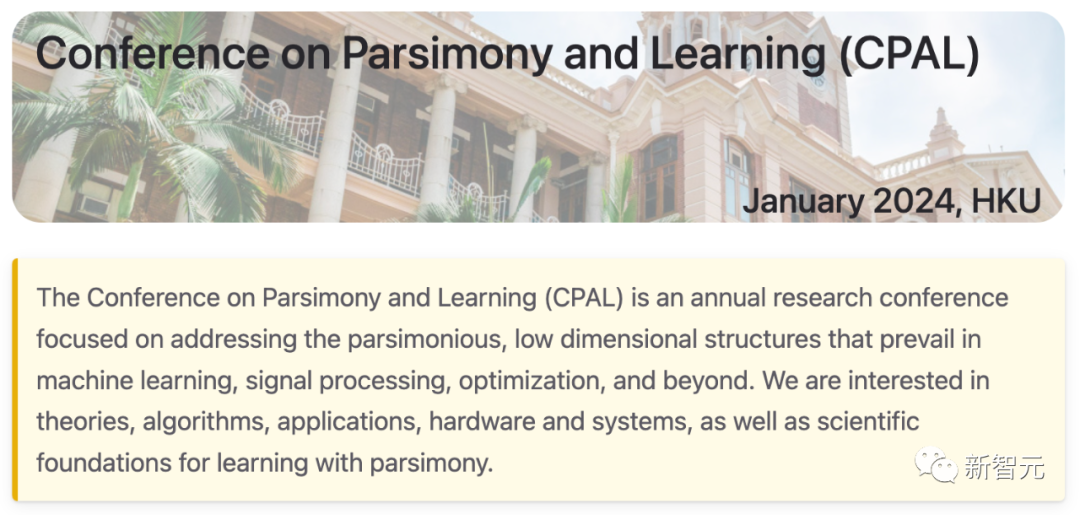

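CPAL (Conference on Parsimony and Learning) is an annual academic conference on parsimony and learning.

The first CPAL will be held January 3-6, 2024, at the Institute of Data Science at the University of Hong Kong.

Conference website: https://cpal.cc

As the name makes clear, this annual research conference is all about "parsimony".

The first edition has two tracks: a proceedings track (archival) and a "Recent Spotlight" track (non-archival).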

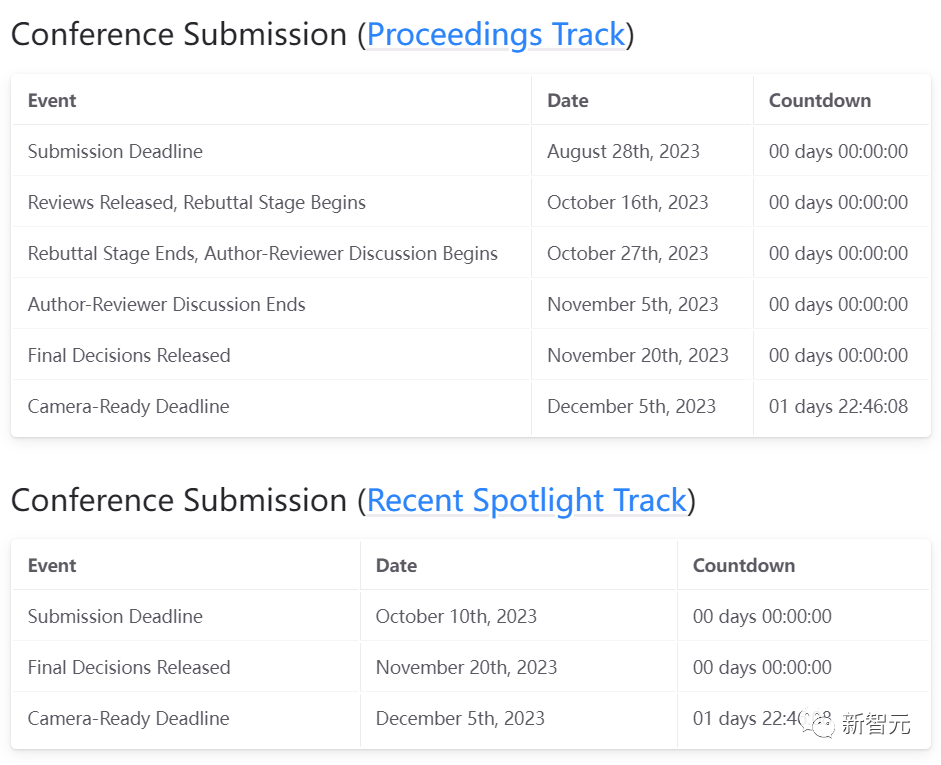

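A quick recap of the timeline: the paper submission deadline for the proceedings track passed more than three months ago, and the deadline for the "Recent Spotlight" track passed more than a month ago.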

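At the end of November, the conference released the final review decisions for both tracks.

Final acceptance results

Professor Yi Ma also announced the final results on Twitter: 9 keynote speakers, 16 Rising Stars awardees, 30 accepted papers in the proceedings track, and 60 papers in the "Recent Spotlight" track.

His tweet also links to the page for each of these.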

The 30 oral papers (proceedings track)

1. Less is More – Towards parsimonious multi-task models using structured sparsity

Authors: Richa Upadhyay, Ronald Phlypo, Rajkumar Saini, Marcus Liwicki

Keywords: Multi-task learning, structured sparsity, group sparsity, parameter pruning, semantic segmentation, depth estimation, surface normal estimation

TL;DR: We propose a method for developing parsimonious models in a multi-task setting using dynamic group sparsity.

2. Closed-Loop Transcription via Convolutional Sparse Coding

Authors: Xili Dai, Ke Chen, Shengbang Tong, Jingyuan Zhang, Xingjian Gao, Mingyang Li, Druv Pai, Yuexiang Zhai, Xiaojun Yuan, Heung-Yeung Shum, Lionel Ni, Yi Ma

Keywords: Convolutional Sparse Coding, Inverse Problem, Closed-Loop Transcription

3. Leveraging Sparse Input and Sparse Models: Efficient Distributed Learning in Resource-Constrained Environments

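Authors: Emmanouil Kariotakis, Grigorios Tsagkatakis, Panagiotis Tsakalides, Anastasios Kyrillidis

Keywords: sparse neural network training, efficient training

TL;DR: We design and study a system that exploits sparsity in the input layer and the intermediate layers so that resource-constrained workers can train and run neural networks in a distributed manner.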

4. How to Prune Your Language Model: Recovering Accuracy on the "Sparsity May Cry" Benchmark

Authors: Eldar Kurtic, Torsten Hoefler, Dan Alistarh

Keywords: pruning, deep learning, benchmarking

TL;DR: We provide a set of pruning guidelines for language models and apply them to the challenging Sparsity May Cry benchmark to recover accuracy.

5. Image Quality Assessment: Integrating Model-centric and Data-centric Approaches

Authors: Peibei Cao, Dingquan Li, Kede Ma

Keywords: Learning-based IQA, model-centric IQA, data-centric IQA, sampling-worthiness

6. Jaxpruner: A Concise Library for Sparsity Research

Authors: Joo Hyung Lee, Wonpyo Park, Nicole Elyse Mitchell, Jonathan Pilault, Johan Samir Obando Ceron, Han-Byul Kim, Namhoon Lee, Elias Frantar, Yun Long, Amir Yazdanbakhsh, Woohyun Han, Shivani Agrawal, Suvinay Subramanian, Xin Wang, Sheng-Chun Kao, Xingyao Zhang, Trevor Gale, Aart J.C. Bik, Milen Ferev, Zhonglin Han, Hong-Seok Kim, Yann Dauphin, Gintare Karolina Dziugaite, Pablo Samuel Castro, Utku Evci

Keywords: jax, sparsity, pruning, quantization, sparse training, efficiency, library, software

TL;DR: This paper introduces JaxPruner, an open-source, JAX-based pruning and sparse training library for machine learning research.

7. NeuroMixGDP: A Neural Collapse-Inspired Random Mixup for Private Data Release

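Authors: Donghao Li, Yang Cao, Yuan Yao

Keywords: Neural Collapse, Differential privacy, Private data publishing, Mixup

TL;DR: This paper proposes a novel private data release framework called NeuroMixGDP, which uses random mixup of neural collapse features to achieve a state-of-the-art privacy-utility trade-off.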

8. Algorithm Design for Online Meta-Learning with Task Boundary Detection

Authors: Daouda Sow, Sen Lin, Yingbin Liang, Junshan Zhang

Keywords: online meta-learning, task boundary detection, domain shift, dynamic regret, out of distribution detection

TL;DR: We propose a new algorithm for task-agnostic online meta-learning in nonstationary environments where task boundaries are unknown.

9. Unsupervised Learning of Structured Representation via Closed-Loop Transcription

Authors: Shengbang Tong, Xili Dai, Yubei Chen, Mingyang Li, Zengyi Li, Brent Yi, Yann LeCun, Yi Ma

Keywords: Unsupervised/Self-supervised Learning, Closed-Loop Transcription

10. Exploring Minimally Sufficient Representation in Active Learning through Label-Irrelevant Patch Augmentation

Authors: Zhiyu Xue, Yinlong Dai, Qi Lei

Keywords: Active Learning, Data Augmentation, Minimally Sufficient Representation

11. Probing Biological and Artificial Neural Networks with Task-dependent Neural Manifolds

Authors: Michael Kuoch, Chi-Ning Chou, Nikhil Parthasarathy, Joel Dapello, James J. DiCarlo, Haim Sompolinsky, SueYeon Chung

Keywords: Computational Neuroscience, Neural Manifolds, Neural Geometry, Representational Geometry, Biologically inspired vision models, Neuro-AI

TL;DR: Using manifold capacity theory and manifold alignment analysis, we study and compare representations in the macaque visual cortex with representations of DNNs trained with different objectives.

12. An Adaptive Tangent Feature Perspective of Neural Networks

Authors: Daniel LeJeune, Sina Alemohammad

Keywords: adaptive, kernel learning, tangent kernel, neural networks, low rank

TL;DR: Adaptive feature models with neural network structure impose an approximate low-rank regularization on the weight matrices.

13. Balance is Essence: Accelerating Sparse Training via Adaptive Gradient Correction

Authors: Bowen Lei, Dongkuan Xu, Ruqi Zhang, Shuren He, Bani Mallick

Keywords: Sparse Training, Space-time Co-efficiency, Acceleration, Stability, Gradient Correction

14. Deep Leakage from Model in Federated Learning

Authors: Zihao Zhao, Mengen Luo, Wenbo Ding

Keywords: Federated learning, distributed learning, privacy leakage

15. Cross-Quality Few-Shot Transfer for Alloy Yield Strength Prediction: A New Materials Science Benchmark and A Sparsity-Oriented Optimization Framework

Authors: Xuxi Chen, Tianlong Chen, Everardo Yeriel Olivares, Kate Elder, Scott McCall, Aurelien Perron, Joseph McKeown, Bhavya Kailkhura, Zhangyang Wang, Brian Gallagher

Keywords: AI4Science, sparsity, bi-level optimization

16. HRBP: Hardware-friendly Regrouping towards Block-based Pruning for Sparse CNN Training

Authors: Haoyu Ma, Chengming Zhang, Lizhi Xiang, Xiaolong Ma, Geng Yuan, Wenkai Zhang, Shiwei Liu, Tianlong Chen, Dingwen Tao, Yanzhi Wang, Zhangyang Wang, Xiaohui Xie

Keywords: efficient training, sparse training, fine-grained structured sparsity, regrouping algorithm

TL;DR: This paper proposes a novel fine-grained structured pruning algorithm that accelerates sparse training of convolutional neural networks in both the forward and backward passes.

17. Piecewise-Linear Manifolds for Deep Metric Learning

Authors: Shubhang Bhatnagar, Narendra Ahuja

Keywords: Deep metric learning, Unsupervised representation learning

18. Sparse Activations with Correlated Weights in Cortex-Inspired Neural Networks

Authors: Chanwoo Chun, Daniel Lee

Keywords: Correlated weights, Biological neural network, Cortex, Neural network gaussian process, Sparse neural network, Bayesian neural network, Generalization theory, Kernel ridge regression, Deep neural network, Random neural network

19. Deep Self-expressive Learning

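Authors: Chen Zhao, Chun-Guang Li, Wei He, Chong You

Keywords: Self-Expressive Model; Subspace Clustering; Manifold Clustering

TL;DR: We propose a "white-box" deep learning model built on the self-expressive model. It is interpretable, robust, and scalable, and is suited to manifold learning and clustering.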

20. Investigating the Catastrophic Forgetting in Multimodal Large Language Model Fine-Tuning

Authors: Yuexiang Zhai, Shengbang Tong, Xiao Li, Mu Cai, Qing Qu, Yong Jae Lee, Yi Ma

Keywords: Multimodal LLM, Supervised Fine-Tuning, Catastrophic Forgetting

TL;DR: Supervised fine-tuning leads to catastrophic forgetting in multimodal large language models.

21. Domain Generalization via Nuclear Norm Regularization

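Authors: Zhenmei Shi, Yifei Ming, Ying Fan, Frederic Sala, Yingyu Liang

Keywords: Domain Generalization, Nuclear Norm, Deep Learning

TL;DR: We propose a simple and effective regularization method for domain generalization, based on the nuclear norm of the learned features.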

22. FIXED: Frustratingly Easy Domain Generalization with Mixup

Authors: Wang Lu, Jindong Wang, Han Yu, Lei Huang, Xiang Zhang, Yiqiang Chen, Xing Xie

Keywords: Domain generalization, Data Augmentation, Out-of-distribution generalization

23. HARD: Hyperplane ARrangement Descent

Authors: Tianjiao Ding, Liangzu Peng, Rene Vidal

Keywords: hyperplane clustering, subspace clustering, generalized principal component analysis

24. Decoding Micromotion in Low-dimensional Latent Spaces from StyleGAN

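Authors: Qiucheng Wu, Yifan Jiang, Junru Wu, Kai Wang, Eric Zhang, Humphrey Shi, Zhangyang Wang, Shiyu Chang

Keywords: generative model, low-rank decomposition

TL;DR: We show that in the latent space of StyleGAN we can consistently find low-dimensional latent subspaces in which universal editing directions can be reconstructed for many meaningful variations (referred to as "micromotions").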

25. Continual Learning with Dynamic Sparse Training: Exploring Algorithms for Effective Model Updates

Authors: Murat Onur Yildirim, Elif Ceren Gok, Ghada Sokar, Decebal Constantin Mocanu, Joaquin Vanschoren

Keywords: continual learning, sparse neural networks, dynamic sparse training

TL;DR: We study dynamic sparse training in continual learning.

26. Emergence of Segmentation with Minimalistic White-Box Transformers

Authors: Yaodong Yu, Tianzhe Chu, Shengbang Tong, Ziyang Wu, Druv Pai, Sam Buchanan, Yi Ma

Keywords: white-box transformer, emergence of segmentation properties

TL;DR: With only a minimalistic supervised training recipe, white-box transformers develop segmentation properties in the network's self-attention maps.

27. Efficiently Disentangle Causal Representations

Authors: Yuanpeng Li, Joel Hestness, Mohamed Elhoseiny, Liang Zhao, Kenneth Church

Keywords: causal representation learning

28. Sparse Fréchet sufficient dimension reduction via nonconvex optimization

Authors: Jiaying Weng, Chenlu Ke, Pei Wang

Keywords: Fréchet regression; minimax concave penalty; multitask regression; sufficient dimension reduction; sufficient variable selection

29. WS-iFSD: Weakly Supervised Incremental Few-shot Object Detection Without Forgetting

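Authors: Xinyu Gong, Li Yin, Juan-Manuel Perez-Rua, Zhangyang Wang, Zhicheng Yan

Keywords: few-shot object detection

TL;DR: Our iFSD framework uses meta-learning and weakly supervised class augmentation to detect objects from both base and novel classes, and significantly outperforms state-of-the-art methods on multiple benchmarks.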

30. PC-X: Profound Clustering via Slow Exemplars

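Authors: Yuangang Pan, Yinghua Yao, Ivor Tsang

Keywords: Deep clustering, interpretable machine learning, Optimization

TL;DR: In this paper, we design a new end-to-end framework, Profound Clustering via Slow Exemplars (PC-X), which is inherently interpretable and broadly applicable to various types of large-scale datasets.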

The 60 papers in the "Recent Spotlight" track can be browsed on the official website: https://cpal.cc/spotlight_track/