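Not long ago, Hugging Face, the largest open-source AI community, released the AI chatbot HuggingChat, and it instantly set the internet abuzz.

Many users remarked that if ChatGPT is Apple's iOS, then the open-source Android is on its way.

And this time, something even more powerful has arrived.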

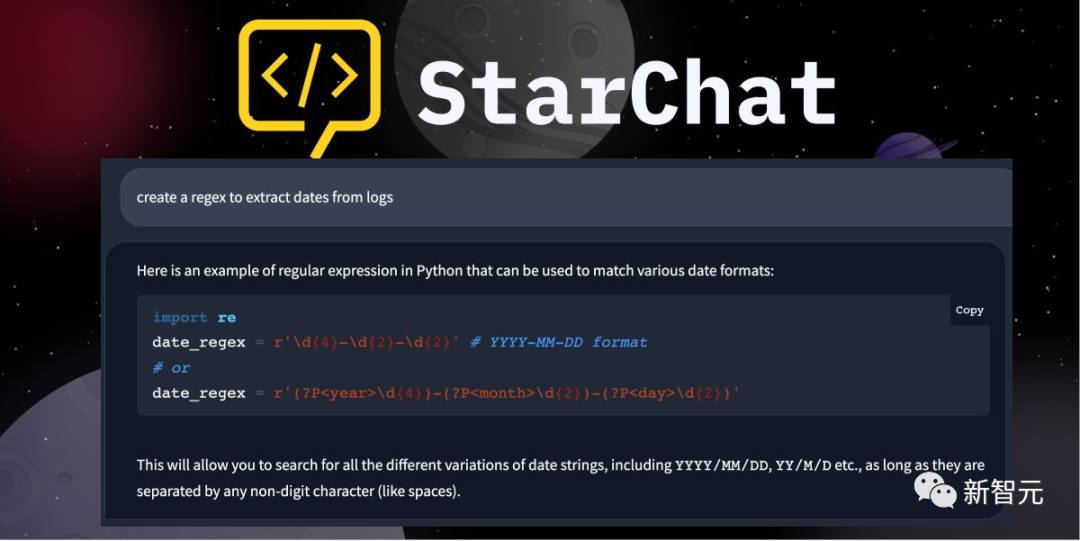

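Hugging Face has not only released StarCoder, an open-source large language model for code, but also launched the coding assistant StarChat.

GitHub Copilot may now be hooked up to the latest GPT-4 capabilities, but it still costs a monthly fee.

With the open-source StarChat, anyone can now enjoy programming just by describing what they want.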

StarCoder becomes a tool for "programming by talking"

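You have probably used GitHub Copilot or ChatGPT for programming tasks such as translating or generating code.

The capabilities of these proprietary systems are impressive, but they come with drawbacks, including a lack of transparency about the public data used to train them and the inability to adapt them to your own domain or codebase.

Fortunately, high-quality open alternatives are now available.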

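These include Salesforce's CodeGen Mono (16B) and Replit's 3B model, which was trained on 20 programming languages.

StarCoder, from the BigCode project, is a 16-billion-parameter model trained on one trillion tokens drawn from more than 80 programming languages, GitHub issues, Git commits, and Jupyter notebooks (all of it permissively licensed).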

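In the blog post, the researchers show how StarCoder can be fine-tuned for chat to create a personalized coding assistant, StarChat.

They also explore several technical details that come up when using large language models as coding assistants, including:

- How LLMs can be prompted to act like conversational agents.

- How OpenAI's Chat Markup Language (ChatML) provides a structured format for conversational messages between human users and AI assistants.

- How to fine-tune a large model on a diverse corpus of dialogues with Transformers and DeepSpeed ZeRO-3.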

Prompting LLMs for dialogue

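As DeepMind and Anthropic have shown, an LLM can be turned into a conversational agent through a careful choice of prompt.

These prompts typically include a so-called "system" message that defines the LLM's character, followed by a series of dialogues between an assistant and a user. For example, here is an excerpt from Anthropic's HHH prompt (which runs to as many as 6k tokens in total):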

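As you can see, the first part of the prompt, "Below are a series of...", corresponds to the system message and specifies that the assistant should have traits such as being "helpful" and "polite".

The dialogue examples then condition the model to follow the multi-turn format of a conversation.

When a user asks a question, the entire prompt is fed to the model, which generates an answer after the Assistant: prefix. The answer is then appended to the prompt, and the process repeats at every turn. Surprisingly, this technique also works for StarCoder!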
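
The turn-by-turn loop described above can be sketched roughly as follows. This is a minimal illustration, not the actual HHH or StarCoder prompt: the system message, the few-shot example, the Human:/Assistant: layout, and the `generate` callable (a stand-in for any text-completion model) are all assumptions made for the example.

```python
from typing import Callable, List, Tuple

# Hypothetical system message and few-shot dialogue (NOT the real HHH prompt).
SYSTEM = "Below is a conversation between a helpful, polite assistant and a human."
FEW_SHOT = [("How do I reverse a list in Python?",
             "Use my_list[::-1] or my_list.reverse().")]


def build_prompt(history: List[Tuple[str, str]], question: str) -> str:
    """Concatenate the system message, all earlier turns, and the new question."""
    parts = [SYSTEM]
    for human, assistant in FEW_SHOT + history:
        parts.append(f"Human: {human}")
        parts.append(f"Assistant: {assistant}")
    parts.append(f"Human: {question}")
    parts.append("Assistant:")  # the model continues writing from here
    return "\n\n".join(parts)


def chat_turn(generate: Callable[[str], str],
              history: List[Tuple[str, str]],
              question: str) -> str:
    """Run one turn: prompt the model, then store the answer for later turns."""
    prompt = build_prompt(history, question)
    # Cut the completion off if the model starts writing the next human turn.
    answer = generate(prompt).split("\n\nHuman:")[0].strip()
    history.append((question, answer))
    return answer
```

Because `history` grows with every call, the prompt sent to the model gets longer at each turn.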

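This is helped by the model's 8k-token context length, which leaves room for a wide variety of programming examples and turns the model into a coding assistant. Here is an excerpt from the StarCoder prompt:

From this, we can see how a carefully crafted prompt can elicit coding behavior similar to what is observed in ChatGPT.

You can find the full prompt at this link: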

https://huggingface.co/datasets/bigcode/ta-prompt/blob/main/TA_prompt_v1.txt

Of course, a major drawback of dialogue prompts is that inference is expensive: every turn of the conversation requires thousands of tokens.
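
A back-of-the-envelope sketch makes the cost concrete. The numbers are illustrative assumptions (a 6,000-token base prompt, 200 new tokens per turn, 5 turns), not measurements from the post.

```python
def total_prompt_tokens(base_prompt: int, tokens_per_turn: int, turns: int) -> int:
    """Tokens the model must read over a conversation when the full
    prompt (base prompt plus all earlier turns) is re-sent at every turn."""
    total = 0
    for turn in range(turns):
        total += base_prompt + turn * tokens_per_turn
    return total


# With a long dialogue prompt versus with no base prompt at all.
print(total_prompt_tokens(6000, 200, 5))  # 32000
print(total_prompt_tokens(0, 200, 5))     # 2000
```

Under these assumptions, almost all of the tokens processed come from re-reading the fixed prompt rather than from the conversation itself.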

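An alternative is to fine-tune the base model on a corpus of dialogues so that it becomes "chatty".

Let's look at a few interesting datasets recently uploaded to the Hub that power most of today's open-source chatbots.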

Datasets for chat language models

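The open-source community is rapidly creating diverse and powerful datasets for turning any base language model into a conversational agent that can follow instructions.

For example:

- The OpenAssistant dataset, which consists of more than 40,000 conversations in which community members take turns playing the role of the user or the AI assistant.

- The ShareGPT dataset, which contains roughly 90,000 conversations between human users and ChatGPT.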

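For this post, the researchers used the OpenAssistant dataset to fine-tune StarCoder. The raw dataset is formatted as a collection of conversation trees, so they preprocessed it so that each row corresponds to a single dialogue between the user and the assistant.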
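
One plausible way to turn a conversation tree into linear dialogues is to emit every root-to-leaf path as its own row. The sketch below assumes each message is a dict with hypothetical `id` and `parent_id` fields (with `parent_id` set to `None` for the first message); the researchers' actual preprocessing may differ.

```python
from typing import Dict, List, Optional


def flatten_tree(messages: List[dict]) -> List[List[dict]]:
    """Return every root-to-leaf path of a conversation tree as one dialogue."""
    # Index the messages by their parent so replies can be looked up quickly.
    children: Dict[Optional[str], List[dict]] = {}
    for msg in messages:
        children.setdefault(msg["parent_id"], []).append(msg)

    dialogues: List[List[dict]] = []

    def walk(node: dict, path: List[dict]) -> None:
        path = path + [node]
        replies = children.get(node["id"], [])
        if not replies:  # leaf reached: the path is a complete dialogue
            dialogues.append(path)
        for reply in replies:
            walk(reply, path)

    for root in children.get(None, []):
        walk(root, [])
    return dialogues
```

A tree whose first message has two alternative replies therefore yields two separate dialogues that share the same opening turn.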

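To avoid drifting too far from the data StarCoder was pretrained on, the researchers also kept only the English dialogues. Start by downloading the processed dataset from the Hub:

As we can see, the dataset contains about 21,000 English conversations. Let's look at one of the training examples, starting with the first one:

This looks like an interesting conversation about moral philosophy. Now let's see how to convert these dialogues into a standard format that simplifies how messages are generated at inference time.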

A standard format for dialogues

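One way to fine-tune on dialogues is to simply insert the system message and roles into each training example, and then separate each dialogue with an end-of-sequence token such as <EOS>. For example, the conversation above could take this form:

This approach works well for training, but it is not ideal for inference.

The model will naturally keep generating unwanted turns until it produces an <EOS> token, so some post-processing is usually needed to prevent this.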

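A more appealing approach is to use a structured format like ChatML, which wraps each turn in a set of special tokens that indicate the role of the query or response. In this format, we have the following special tokens:

- <|system|>: indicates which part of the dialogue contains the system message that conditions the assistant's persona.

- <|user|>: indicates that the message comes from the human user.

- <|assistant|>: indicates that the message comes from the AI assistant.

- <|end|>: indicates the end of a turn or of the system message.

Next, let's write a function that wraps a running example with these tokens and see what it looks like: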
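
A minimal sketch of such a wrapping function is shown here. The four special tokens come from the text; the message schema (dicts with `role` and `content` keys) and the exact placement of newlines are assumptions for illustration and may not match the original implementation.

```python
from typing import Dict, List

SYSTEM_TOKEN = "<|system|>"
USER_TOKEN = "<|user|>"
ASSISTANT_TOKEN = "<|assistant|>"
END_TOKEN = "<|end|>"

# Map each role name to the special token that opens its turn.
ROLE_TOKENS = {"user": USER_TOKEN, "assistant": ASSISTANT_TOKEN}


def format_dialogue(messages: List[Dict[str, str]], system_message: str = "") -> str:
    """Wrap the system message and every turn in the special chat tokens."""
    prompt = f"{SYSTEM_TOKEN}\n{system_message}{END_TOKEN}\n"
    for message in messages:
        role_token = ROLE_TOKENS[message["role"]]  # KeyError on unknown roles
        prompt += f"{role_token}\n{message['content']}{END_TOKEN}\n"
    return prompt


example = [
    {"role": "user", "content": "Hi"},
    {"role": "assistant", "content": "Hello!"},
]
print(format_dialogue(example, "Be helpful."))
```

At inference time, the same function can be applied to the conversation so far, followed by an opening <|assistant|> token, so that the model stops naturally when it emits <|end|>.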

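This looks like what we need! The next step is to add these special tokens to the tokenizer's vocabulary, so let's download the StarCoder tokenizer and add them:

As a sanity check, let's see whether tokenizing the string <|assistant|> produces a single token ID: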
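
The two steps above (registering the tokens, then checking that one of them maps to a single ID) can be sketched as below. The helpers accept any Hugging Face-style tokenizer object; the checkpoint name in the commented usage is an assumption, and loading it requires network access and possibly accepting the model license.

```python
CHAT_TOKENS = ["<|system|>", "<|user|>", "<|assistant|>", "<|end|>"]


def add_chat_tokens(tokenizer) -> int:
    """Register the chat tokens as special tokens; returns how many were added."""
    return tokenizer.add_special_tokens({"additional_special_tokens": CHAT_TOKENS})


def is_single_token(tokenizer, text: str) -> bool:
    """True if `text` is encoded as exactly one token ID."""
    # add_special_tokens=False keeps BOS/EOS markers out of the count.
    return len(tokenizer(text, add_special_tokens=False)["input_ids"]) == 1


# Usage with Transformers (checkpoint name is an assumption):
# from transformers import AutoTokenizer
# tokenizer = AutoTokenizer.from_pretrained("bigcode/starcoderbase")
# add_chat_tokens(tokenizer)
# print(is_single_token(tokenizer, "<|assistant|>"))
# After adding tokens, the model's embedding matrix must be resized to match:
# model.resize_token_embeddings(len(tokenizer))
```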

It works!

Masking user labels

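An additional benefit of the special chat tokens is that we can use them to mask the loss from the labels associated with the user turns of each dialogue.

The reason for doing this is to ensure that the model is conditioned on the user parts of the dialogue but is trained only to predict the assistant parts (which is what really matters during inference).

Here is a simple function that masks the labels, converting all user tokens to -100 so that they are subsequently ignored by the loss function: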
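
A simplified version of this masking, working on plain lists of token IDs, might look like the sketch below. The token IDs passed in are placeholders; in practice they would be looked up from the tokenizer. The value -100 is the default `ignore_index` of PyTorch's cross-entropy loss.

```python
from typing import List

IGNORE_INDEX = -100  # skipped by PyTorch's cross-entropy loss by default


def mask_user_labels(input_ids: List[int],
                     user_token_id: int,
                     assistant_token_id: int) -> List[int]:
    """Copy input_ids into labels, replacing every token inside a user turn
    (from the user token up to the next assistant token) with IGNORE_INDEX."""
    labels = list(input_ids)
    in_user_turn = False
    for i, token_id in enumerate(input_ids):
        if token_id == user_token_id:
            in_user_turn = True
        elif token_id == assistant_token_id:
            in_user_turn = False
        if in_user_turn:
            labels[i] = IGNORE_INDEX
    return labels


# Placeholder IDs: 10 = <|user|>, 11 = <|assistant|>, 99 = <|end|>
ids = [10, 1, 2, 99, 11, 3, 4, 99]
print(mask_user_labels(ids, user_token_id=10, assistant_token_id=11))
# [-100, -100, -100, -100, 11, 3, 4, 99]
```

Note that in this sketch the <|end|> token closing a user turn is masked as well, while the one closing an assistant turn is kept, so the model still learns when to stop.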

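As we can see, all the user input IDs have been masked in the labels. These special tokens have embeddings that need to be learned during fine-tuning. Let's take a look at what that involves.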

Fine-tuning StarCoder with DeepSpeed ZeRO-3

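The StarCoder and StarCoderBase models have 16 billion parameters, which means a lot of GPU vRAM is needed to fine-tune them.

For example, simply loading the model weights in full FP32 precision requires about 60GB of vRAM. Fortunately, there are a few options for dealing with models this large:

- Use parameter-efficient techniques like LoRA, which freeze the base model's weights and insert a small number of learnable parameters.

- Use methods like DeepSpeed ZeRO-3 or FSDP, which shard the model weights, optimizer states, and gradients across multiple devices.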
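
The memory figures can be checked with some quick arithmetic. The sketch below uses the 16-billion-parameter count from the text; the 16 bytes per parameter for training state is the usual estimate for mixed-precision Adam (2 for half-precision weights, 2 for gradients, 12 for FP32 master weights plus two optimizer moments) and is an assumption here, as is the even split across 8 GPUs. Activations are ignored.

```python
PARAMS = 16e9  # parameter count quoted in the text


def to_gb(num_bytes: float) -> float:
    """Bytes to decimal gigabytes."""
    return num_bytes / 1e9


def to_gib(num_bytes: float) -> float:
    """Bytes to binary gibibytes."""
    return num_bytes / 2**30


fp32_weight_bytes = PARAMS * 4    # 4 bytes per FP32 weight
training_state_bytes = PARAMS * 16  # assumed mixed-precision Adam footprint

print(f"FP32 weights:     {to_gb(fp32_weight_bytes):.0f} GB "
      f"({to_gib(fp32_weight_bytes):.1f} GiB)")
print(f"Training state:   {to_gb(training_state_bytes):.0f} GB")
print(f"Sharded on 8 GPUs: {to_gb(training_state_bytes) / 8:.0f} GB per GPU")
```

The FP32 weights alone come to 64 GB (about 59.6 GiB), in line with the "about 60GB" figure, and sharding the full training state across 8 devices brings the per-GPU share well under the capacity of an 80GB card.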

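Since DeepSpeed is tightly integrated into Transformers, the researchers use it to train the model. To get started, first clone BigCode's StarCoder repo from GitHub and navigate to the chat directory:

Next, create a Python virtual environment using, for example, Conda:

Then install PyTorch v1.13.1. Since this step is hardware-dependent, the researchers point readers to the PyTorch installation page. Once it is installed, install the rest of the project dependencies:

You also need to be logged in to Hugging Face. To do so, run:

Finally, install Git LFS with:

The final step is to launch the training! If you are lucky enough to have 8 A100 (80GB) GPUs to run this training, you can run the following command. Training should take around 45 minutes: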

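Here the config.yaml file specifies all the parameters associated with the dataset, model, and training. You can edit it to adapt the training to a new dataset. Your trained model will then be available on the Hub!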
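
For readers unfamiliar with ZeRO-3, the sketch below shows roughly what a DeepSpeed configuration for the Transformers integration can look like. It is a generic illustration, not the configuration used for StarChat: the specific values are assumptions, and the "auto" entries are placeholders that the Transformers Trainer fills in from its own training arguments.

```python
import json

# Generic ZeRO stage 3 settings (illustrative, not the project's actual file).
zero3_config = {
    "bf16": {"enabled": "auto"},
    "zero_optimization": {
        "stage": 3,  # shard weights, gradients, and optimizer states
        "overlap_comm": True,
        "contiguous_gradients": True,
        # Gather the sharded weights into one checkpoint when saving.
        "stage3_gather_16bit_weights_on_model_save": True,
    },
    "gradient_accumulation_steps": "auto",
    "gradient_clipping": "auto",
    "train_batch_size": "auto",
    "train_micro_batch_size_per_gpu": "auto",
}

config_json = json.dumps(zero3_config, indent=2)
print(config_json)
```

The resulting JSON can be saved to a file and passed to the Trainer through the `deepspeed` training argument, which accepts either a file path or a dict.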

StarCoder as a coding assistant

Generating plots

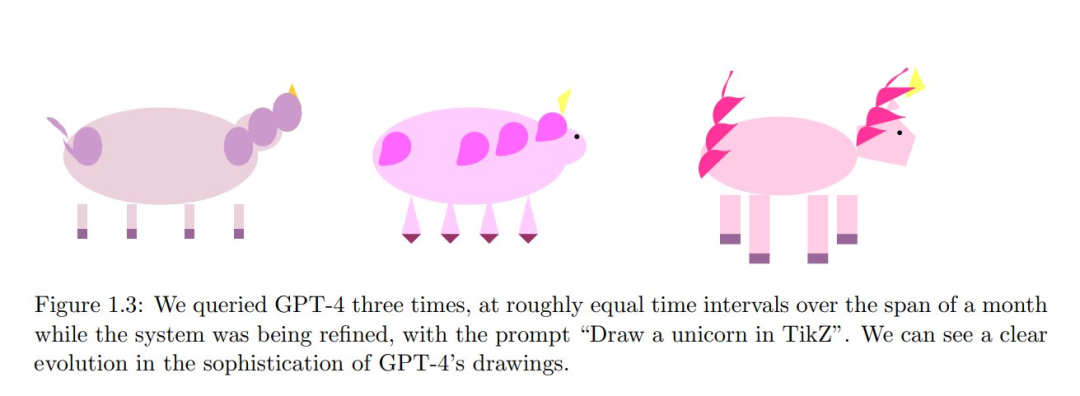

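The researchers wanted to see how their model handles basic visualization tasks, in the spirit of GPT-4's famous unicorn drawing in TikZ.

To do this, they prompted the model with some coding tasks and got good results!

Admittedly, these results are somewhat cherry-picked, since they selected only completions that produced working code, but the others were not far off.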

Example 1: bar chart

Prompt:

Response:

Example 2: plotting

Prompt:

Response:

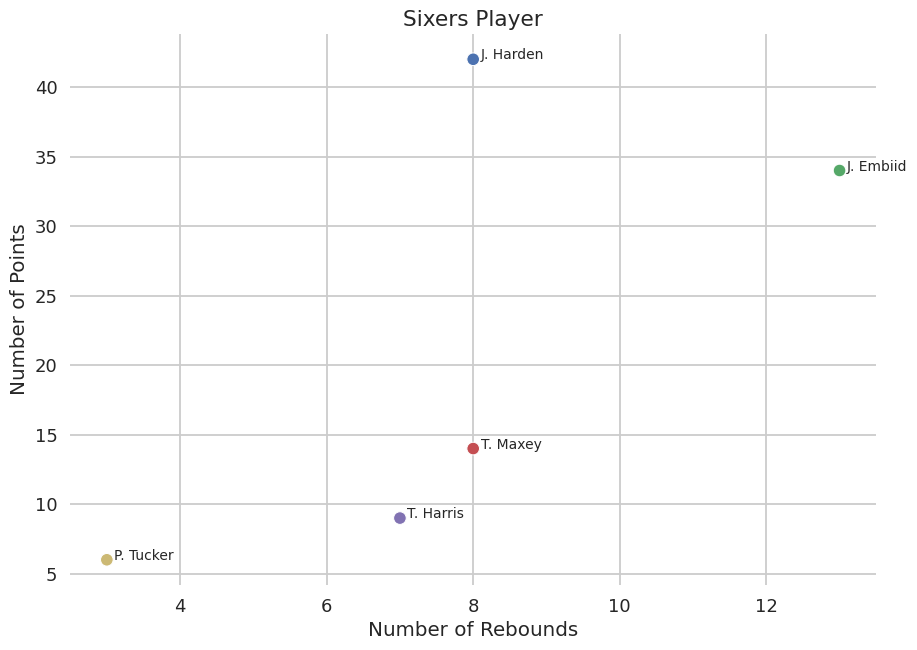

Example 3: basketball

Prompt:

Response:

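Evaluation

Evaluating coding assistants is tricky, because the user-facing metrics the researchers care about are often not captured by conventional NLP benchmarks.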

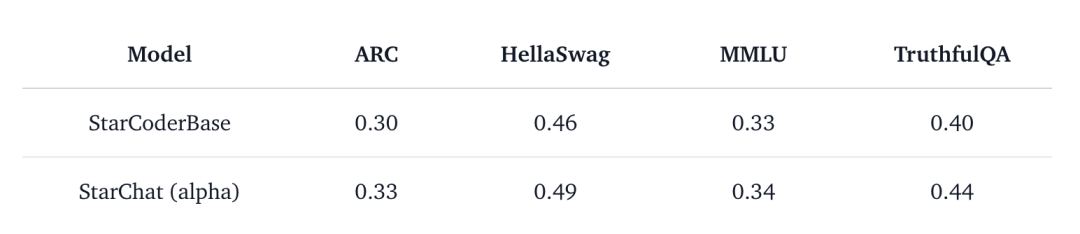

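For example, the researchers ran the base and fine-tuned StarCoderBase models through EleutherAI's language model evaluation harness to measure their performance on the following benchmarks:

- AI2 Reasoning Challenge (ARC): grade-school multiple-choice science questions.

- HellaSwag: commonsense reasoning about everyday events.

- MMLU: multiple-choice questions in 57 subjects (professional and academic).

- TruthfulQA: tests the model's ability to separate fact from an adversarially selected set of incorrect statements.

The results show that the fine-tuned model improves, but not in a way that reflects its conversational capabilities.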

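So what can be done instead of automatic metrics on benchmarks? To date, two main approaches have been proposed:

- Human evaluation: show human labelers the outputs generated for a given prompt and have them rank the outputs from "best" to "worst". This is the current gold standard used to create systems like InstructGPT.

- AI evaluation: give a capable language model like GPT-4 the generated outputs along with a prompt that asks it to judge their quality. This is the approach used to evaluate LMSYS's Vicuna model.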

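As a simple experiment, the researchers used ChatGPT to test the StarCoder models on several programming languages.

To do this, they first created a seed dataset of interesting prompts for evaluation. They used ChatGPT to bootstrap this process, asking it things such as:

or

In the second case, ChatGPT actually generated more data than was asked for.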

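The dataset now contains 115 prompts, mostly in Python. Three quarters of the prompts are instructions asking for code, and one quarter ask for feedback on a buggy code sample. In the experiment, the researchers asked OpenAI's models to rate each answer on a scale of 1 to 8, using a modified version of the Vicuna code prompt to compare responses.

In this setting, the instruction-tuned StarCoder model scored higher than the base model 95.6% of the time.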
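
A figure like this can be computed from paired scores as a simple win rate. The sketch below uses made-up scores on the 1-8 scale purely to show the calculation; it does not reproduce the researchers' data, and counting ties as non-wins is an assumption.

```python
from typing import List, Tuple


def win_rate(score_pairs: List[Tuple[int, int]]) -> float:
    """Fraction of prompts where the tuned model's score is strictly
    higher than the base model's. Each pair is (base_score, tuned_score)."""
    wins = sum(1 for base, tuned in score_pairs if tuned > base)
    return wins / len(score_pairs)


# Made-up (base, tuned) scores for four prompts, for illustration only.
scores = [(3, 7), (5, 6), (6, 4), (2, 8)]
print(f"{win_rate(scores):.1%}")  # 75.0%
```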

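An interesting observation is that, compared with GPT-4, ChatGPT prefers to return safer scores in the middle of the range, whereas GPT-4 is more willing to give 1s and 8s.

Here is a quick example of the scores that LLM evaluation can return for a given prompt and response pair.

Prompt:

Instruction-tuned completion (Assistant 2):

Base model completion (Assistant 1):

GPT-4 evaluation: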

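We can compare this with ChatGPT's response, which seems to miss the fact that Assistant 1 did not actually accomplish the task. In its response, it says the second answer is better but gives it a lower score.

ChatGPT evaluation:

This shows that, while there is extremely valuable signal in AI evaluation, there is still a lot to learn about how to compare models and calibrate these results against human judgments.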

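Limitations and future directions

Like many other language models, this alpha version of StarChat has limitations that still need to be addressed, including a tendency to hallucinate facts and to produce problematic content (especially when prompted to).

In particular, the model has not yet been aligned with human preferences using techniques like RLHF, nor is it deployed with in-the-loop filtering of responses as ChatGPT is.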

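The researchers found that code generation models like StarCoder can be turned into conversational agents with a diverse dataset like OpenAssistant.

One possible explanation is that StarCoder was trained on both code and GitHub issues, and the latter provide a rich signal of natural language content.

The researchers say they are excited to see the community take StarCoder to the next stage, and perhaps it will power the next wave of open-source assistants.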