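The Keras Python library makes creating deep learning models fast and easy. The sequential API lets you create models layer by layer for most problems. It is limited in that it does not allow you to create models that share layers or have multiple inputs or outputs. The functional API in Keras is an alternative way of creating models that offers more flexibility, including the ability to create more complex models.

In this tutorial, you will discover how to use the more flexible functional API in Keras to define deep learning models. After completing this tutorial, you will know:

- The difference between the sequential and functional APIs.

- How to define simple multilayer perceptron, convolutional neural network, and recurrent neural network models using the functional API.

- How to define more complex models with shared layers and multiple inputs and outputs.

Tutorial Overview

This tutorial is divided into 7 parts. They are:

- Keras Sequential Models

- Keras Functional Models

- Standard Network Models

- Shared Layers Model

- Multiple Input and Output Models

- Best Practices

- NEW: Note on the Functional API Python Syntax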

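I. Keras Sequential Models

As a review, Keras provides a Sequential model API. If you are new to Keras or deep learning, see this step-by-step Keras tutorial. The Sequential model API is a way of creating deep learning models where an instance of the Sequential class is created and model layers are created and added to it. For example, the layers can be defined and passed to Sequential as an array:

```python
from keras.models import Sequential
from keras.layers import Dense

# pass all layers to the constructor as a list
model = Sequential([Dense(2, input_dim=1), Dense(1)])
```

Layers can also be added piecewise:

```python
from keras.models import Sequential
from keras.layers import Dense

# start with an empty model and add layers one at a time
model = Sequential()
model.add(Dense(2, input_dim=1))
model.add(Dense(1))
```

The Sequential model API is great for developing deep learning models in most situations, but it also has some limitations. For example, it is not straightforward to define models that may have multiple different input sources, produce multiple output targets, or reuse layers.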

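II. Keras Functional Models

The Keras functional API provides a more flexible way of defining models. It specifically allows you to define models with multiple inputs or outputs as well as models that share layers. More than that, it allows you to define ad hoc acyclic network graphs. Models are defined by creating instances of layers and connecting them directly to each other in pairs, then defining a Model that specifies which layers act as the input and output of the model.

Let's look at the three unique aspects of the Keras functional API in turn:

1. Defining Input

Unlike the Sequential model, you must create and define a standalone Input layer that specifies the shape of the input data. The Input layer takes a shape argument, a tuple that indicates the dimensionality of the input data. When the input data is one-dimensional, as for a multilayer perceptron, the shape must explicitly leave room for the mini-batch size used when splitting the data during training. The shape tuple is therefore always defined with a hanging last dimension when the input is one-dimensional, written (2,), for example:

```python
from keras.layers import Input

# each sample is a vector of 2 values; note the trailing comma
visible = Input(shape=(2,))
```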
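The trailing comma matters in plain Python: `(2,)` is a one-element tuple, while `(2)` is just the integer 2. The sketch below uses only built-in Python (no Keras calls) to show how the per-sample shape relates to the batch shape reported in the model summaries later in this tutorial. The variable names are illustrative.

```python
# The shape passed to Input describes ONE sample, not the whole batch.
sample_shape = (2,)   # one-element tuple: each sample is a vector of 2 values
not_a_tuple = (2)     # without the comma this is just the integer 2

print(type(sample_shape).__name__)  # tuple
print(type(not_a_tuple).__name__)   # int

# Model summaries show shapes with the batch dimension prepended.
# It appears as None because the batch size is not fixed in advance.
batch_shape = (None,) + sample_shape
print(batch_shape)  # (None, 2)
```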

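2. Connecting Layers

The layers in the model are connected pairwise. This is done by specifying where the input comes from when defining each new layer. A bracket notation is used: after the layer is created, the layer that supplies the input to the current layer is given in a second pair of parentheses. Let's make this clear with a short example. We can create the input layer as above, then create a hidden layer as a Dense that receives input only from the input layer.

```python
from keras.layers import Input
from keras.layers import Dense

visible = Input(shape=(2,))
# create the Dense layer, then call it on the input layer's output
hidden = Dense(2)(visible)
```

Note the (visible) after the creation of the Dense layer, which connects the output of the input layer as the input to the Dense hidden layer. It is this way of connecting layers piece by piece that gives the functional API its flexibility. For example, you can see how easy it would be to start defining ad hoc graphs of layers.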
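The `Dense(2)(visible)` notation is ordinary Python: the first pair of parentheses constructs a layer object, and the second pair calls that object on its input. The toy sketch below mimics this in plain Python. `ToyTensor` and `ToyLayer` are hypothetical stand-ins written for illustration, not Keras classes, and they do not reflect how Keras is implemented internally.

```python
class ToyTensor:
    """Hypothetical stand-in for the symbolic output of a layer."""
    def __init__(self, name, source=None):
        self.name = name
        self.source = source  # the tensor this one was computed from


class ToyLayer:
    """Hypothetical stand-in for a layer; not the real Keras implementation."""
    def __init__(self, name):
        self.name = name

    def __call__(self, inputs):
        # Calling the layer object connects it to `inputs` and returns a new tensor.
        return ToyTensor(self.name + "_out", source=inputs)


visible = ToyTensor("visible")

# One-line form, as used throughout this tutorial.
hidden = ToyLayer("dense")(visible)

# Equivalent two-step form: build the layer first, then call it.
layer = ToyLayer("dense")
hidden_two_step = layer(visible)

print(hidden.name, "<-", hidden.source.name)  # dense_out <- visible
```

Both forms produce a result connected to the same input, so the one-line form is simply a shorthand.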

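3. Creating the Model

After creating all of your model layers and connecting them together, you must define the model. As with the Sequential API, the model is the thing you can summarize, fit, evaluate, and use to make predictions. Keras provides a Model class that you can use to create a model from your created layers. It requires only that you specify the input and output layers. For example:

```python
from keras.models import Model
from keras.layers import Input
from keras.layers import Dense

visible = Input(shape=(2,))
hidden = Dense(2)(visible)
# the model is defined by its input and output layers only
model = Model(inputs=visible, outputs=hidden)
```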
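Naming only the inputs and outputs is enough because each layer output remembers what it was computed from, so everything in between can be recovered by walking backwards from the output. The following plain-Python sketch illustrates that idea for a simple chain. `Node`, `toy_layer`, and `trace_layers` are hypothetical names invented for this example, and the real Keras bookkeeping is more involved.

```python
class Node:
    """Hypothetical record of one layer output and the node it came from."""
    def __init__(self, layer_name, parent=None):
        self.layer_name = layer_name
        self.parent = parent


def toy_layer(name):
    """Return a callable that, like a layer, attaches itself to an input node."""
    return lambda parent: Node(name, parent)


def trace_layers(inputs, outputs):
    """Walk from the output node back to the input node, collecting layer names."""
    names = []
    node = outputs
    while node is not None:
        names.append(node.layer_name)
        if node is inputs:
            break
        node = node.parent
    return list(reversed(names))


visible = Node("input")
hidden = toy_layer("dense_1")(visible)
output = toy_layer("dense_2")(hidden)

print(trace_layers(inputs=visible, outputs=output))
# ['input', 'dense_1', 'dense_2']
```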

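Now that we know all of the key pieces of the Keras functional API, let's work through defining a suite of different models to build up some practice with it. Each example is executable, prints the structure, and creates a diagram of the graph. I recommend doing this for your own models to make it clear what exactly you have defined. My hope is that these examples provide templates for you when you want to define your own models using the functional API in the future.

III. Standard Network Models

When getting started with the functional API, it is a good idea to see how some standard neural network models are defined. In this section, we will look at defining a simple multilayer perceptron, a convolutional neural network, and a recurrent neural network. These examples will provide a foundation for understanding the more elaborate examples later.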

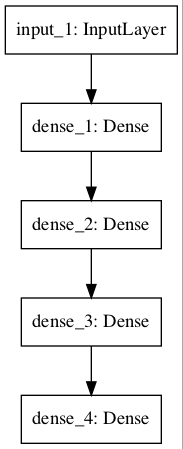

- Multilayer Perceptron

In this section, we define a multilayer perceptron model for binary classification. The model has 10 inputs, 3 hidden layers with 10, 20, and 10 neurons, and an output layer with 1 output. Rectified linear activation functions are used in each hidden layer and a sigmoid activation function is used in the output layer, for binary classification.

```python
# Multilayer Perceptron
from keras.utils import plot_model
from keras.models import Model
from keras.layers import Input
from keras.layers import Dense

visible = Input(shape=(10,))
hidden1 = Dense(10, activation='relu')(visible)
hidden2 = Dense(20, activation='relu')(hidden1)
hidden3 = Dense(10, activation='relu')(hidden2)
output = Dense(1, activation='sigmoid')(hidden3)
model = Model(inputs=visible, outputs=output)
# summarize layers (summary() prints the table itself)
model.summary()
# plot graph (requires pydot and graphviz)
plot_model(model, to_file='multilayer_perceptron_graph.png')
```

Running the example prints the following summary:

```
_________________________________________________________________
Layer (type)                 Output Shape              Param #
=================================================================
input_1 (InputLayer)         (None, 10)                0
_________________________________________________________________
dense_1 (Dense)              (None, 10)                110
_________________________________________________________________
dense_2 (Dense)              (None, 20)                220
_________________________________________________________________
dense_3 (Dense)              (None, 10)                210
_________________________________________________________________
dense_4 (Dense)              (None, 1)                 11
=================================================================
Total params: 551
Trainable params: 551
Non-trainable params: 0
_________________________________________________________________
```

A plot of the network graph is shown below:

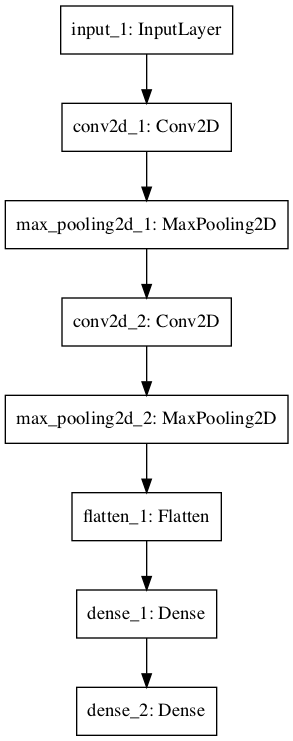
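The parameter counts in the summary can be checked by hand: a fully connected layer has one weight per input-unit pair plus one bias per unit. A small sketch of that arithmetic in plain Python follows. The helper name `dense_params` is made up for this example.

```python
def dense_params(n_inputs, n_units):
    """Weights (n_inputs * n_units) plus one bias per unit."""
    return n_inputs * n_units + n_units


# (inputs, units) for hidden1, hidden2, hidden3 and the output layer.
layers = [(10, 10), (10, 20), (20, 10), (10, 1)]
counts = [dense_params(n_in, n_out) for n_in, n_out in layers]

print(counts)       # [110, 220, 210, 11]
print(sum(counts))  # 551
```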

- Convolutional Neural Network

In this section, we will define a convolutional neural network for image classification. The model receives black-and-white 64×64 images as input, then has a sequence of two convolutional and pooling layers as feature extractors, followed by a fully connected layer to interpret the features and an output layer with a sigmoid activation for two-class predictions.

```python
# Convolutional Neural Network
from keras.utils import plot_model
from keras.models import Model
from keras.layers import Input
from keras.layers import Dense
from keras.layers import Flatten
from keras.layers import Conv2D
from keras.layers import MaxPooling2D

visible = Input(shape=(64,64,1))
conv1 = Conv2D(32, kernel_size=4, activation='relu')(visible)
pool1 = MaxPooling2D(pool_size=(2, 2))(conv1)
conv2 = Conv2D(16, kernel_size=4, activation='relu')(pool1)
pool2 = MaxPooling2D(pool_size=(2, 2))(conv2)
flat = Flatten()(pool2)
hidden1 = Dense(10, activation='relu')(flat)
output = Dense(1, activation='sigmoid')(hidden1)
model = Model(inputs=visible, outputs=output)
# summarize layers (summary() prints the table itself)
model.summary()
# plot graph (requires pydot and graphviz)
plot_model(model, to_file='convolutional_neural_network.png')
```

Running the example prints the following summary:

```
_________________________________________________________________
Layer (type)                 Output Shape              Param #
=================================================================
input_1 (InputLayer)         (None, 64, 64, 1)         0
_________________________________________________________________
conv2d_1 (Conv2D)            (None, 61, 61, 32)        544
_________________________________________________________________
max_pooling2d_1 (MaxPooling2 (None, 30, 30, 32)        0
_________________________________________________________________
conv2d_2 (Conv2D)            (None, 27, 27, 16)        8208
_________________________________________________________________
max_pooling2d_2 (MaxPooling2 (None, 13, 13, 16)        0
_________________________________________________________________
flatten_1 (Flatten)          (None, 2704)              0
_________________________________________________________________
dense_1 (Dense)              (None, 10)                27050
_________________________________________________________________
dense_2 (Dense)              (None, 1)                 11
=================================================================
Total params: 35,813
Trainable params: 35,813
Non-trainable params: 0
_________________________________________________________________
```

A plot of the network graph is shown below:

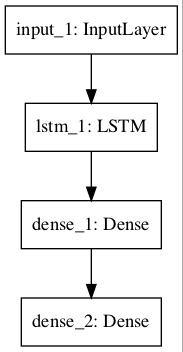
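The output shapes and parameter counts in this summary follow from simple arithmetic. The sketch below reproduces them in plain Python, assuming the settings the example leaves at their Keras defaults: convolutions without padding and with stride 1, and pooling windows that do not overlap. The helper names are invented for illustration.

```python
def conv_out(size, kernel):
    """Output width/height of an unpadded convolution with stride 1."""
    return size - kernel + 1


def pool_out(size, pool):
    """Output width/height of non-overlapping pooling (remainder dropped)."""
    return size // pool


def conv_params(kernel, in_channels, filters):
    """One kernel x kernel x in_channels weight block plus a bias, per filter."""
    return (kernel * kernel * in_channels + 1) * filters


size = 64
size = conv_out(size, 4)   # 61
size = pool_out(size, 2)   # 30
size = conv_out(size, 4)   # 27
size = pool_out(size, 2)   # 13
flat = size * size * 16    # values left after Flatten

print(size, flat)               # 13 2704
print(conv_params(4, 1, 32))    # 544
print(conv_params(4, 32, 16))   # 8208
print(flat * 10 + 10)           # 27050 (first Dense layer)
```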

- Recurrent Neural Network

In this section, we will define a long short-term memory (LSTM) recurrent neural network for sequence classification. The model expects 100 time steps of one feature as input. The model has a single LSTM hidden layer to extract features from the sequence, followed by a fully connected layer to interpret the LSTM output, followed by an output layer for making binary predictions.

```python
# Recurrent Neural Network
from keras.utils import plot_model
from keras.models import Model
from keras.layers import Input
from keras.layers import Dense
from keras.layers import LSTM

visible = Input(shape=(100,1))
hidden1 = LSTM(10)(visible)
hidden2 = Dense(10, activation='relu')(hidden1)
output = Dense(1, activation='sigmoid')(hidden2)
model = Model(inputs=visible, outputs=output)
# summarize layers (summary() prints the table itself)
model.summary()
# plot graph (requires pydot and graphviz)
plot_model(model, to_file='recurrent_neural_network.png')
```

Running the example prints the following summary:

```
_________________________________________________________________
Layer (type)                 Output Shape              Param #
=================================================================
input_1 (InputLayer)         (None, 100, 1)            0
_________________________________________________________________
lstm_1 (LSTM)                (None, 10)                480
_________________________________________________________________
dense_1 (Dense)              (None, 10)                110
_________________________________________________________________
dense_2 (Dense)              (None, 1)                 11
=================================================================
Total params: 601
Trainable params: 601
Non-trainable params: 0
_________________________________________________________________
```

A plot of the model graph is saved to recurrent_neural_network.png.
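The 480 parameters reported for the LSTM layer can be derived by hand. A standard LSTM keeps four sets of weights (the input, forget, and output gates plus the cell candidate), each with input weights, recurrent weights, and a bias. The sketch below is plain Python, and `lstm_params` is a hypothetical helper name.

```python
def lstm_params(n_features, n_units):
    """Four weight sets, each with input weights, recurrent weights and a bias."""
    per_set = n_units * n_features + n_units * n_units + n_units
    return 4 * per_set


lstm = lstm_params(n_features=1, n_units=10)
print(lstm)  # 480

# Adding the two Dense layers (10*10+10 and 10*1+1) gives the model total.
print(lstm + 110 + 11)  # 601
```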

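IV. Shared Layers Model

Multiple layers can share the output from one layer. For example, there may be multiple different feature extraction layers from an input, or multiple layers used to interpret the output from a feature extraction layer. Let's look at both of these examples.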

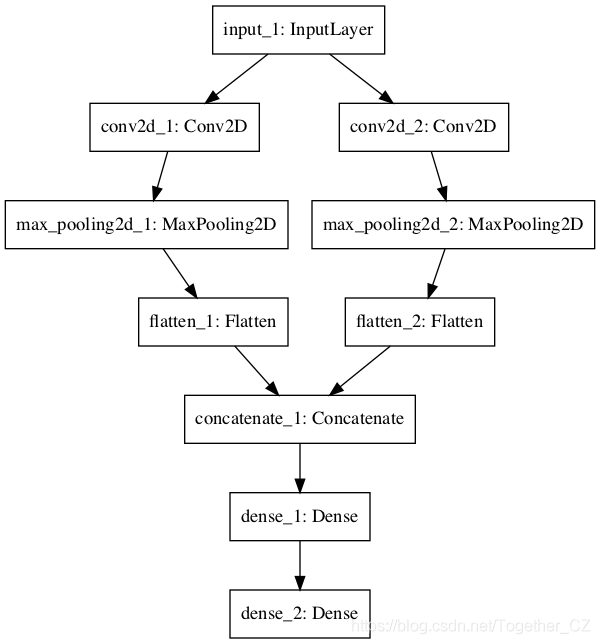

- Shared Input Layer

In this section, we define multiple convolutional layers with differently sized kernels to interpret an image input. The model takes black-and-white images with a size of 64×64 pixels. There are two CNN feature extraction submodels that share this input; the first has a kernel size of 4 and the second a kernel size of 8. The outputs from these feature extraction submodels are flattened into vectors and concatenated into one long vector, which is passed on to a fully connected layer for interpretation before a final output layer makes a binary classification.

```python
# Shared Input Layer
from keras.utils import plot_model
from keras.models import Model
from keras.layers import Input
from keras.layers import Dense
from keras.layers import Flatten
from keras.layers import Conv2D
from keras.layers import MaxPooling2D
from keras.layers import concatenate

# input layer
visible = Input(shape=(64,64,1))
# first feature extractor
conv1 = Conv2D(32, kernel_size=4, activation='relu')(visible)
pool1 = MaxPooling2D(pool_size=(2, 2))(conv1)
flat1 = Flatten()(pool1)
# second feature extractor
conv2 = Conv2D(16, kernel_size=8, activation='relu')(visible)
pool2 = MaxPooling2D(pool_size=(2, 2))(conv2)
flat2 = Flatten()(pool2)
# merge feature extractors
merge = concatenate([flat1, flat2])
# interpretation layer
hidden1 = Dense(10, activation='relu')(merge)
# prediction output
output = Dense(1, activation='sigmoid')(hidden1)
model = Model(inputs=visible, outputs=output)
# summarize layers (summary() prints the table itself)
model.summary()
# plot graph (requires pydot and graphviz)
plot_model(model, to_file='shared_input_layer.png')
```

Running the example prints the following summary:

```
____________________________________________________________________________________________________
Layer (type)                     Output Shape          Param #     Connected to
====================================================================================================
input_1 (InputLayer)             (None, 64, 64, 1)     0
____________________________________________________________________________________________________
conv2d_1 (Conv2D)                (None, 61, 61, 32)    544         input_1[0][0]
____________________________________________________________________________________________________
conv2d_2 (Conv2D)                (None, 57, 57, 16)    1040        input_1[0][0]
____________________________________________________________________________________________________
max_pooling2d_1 (MaxPooling2D)   (None, 30, 30, 32)    0           conv2d_1[0][0]
____________________________________________________________________________________________________
max_pooling2d_2 (MaxPooling2D)   (None, 28, 28, 16)    0           conv2d_2[0][0]
____________________________________________________________________________________________________
flatten_1 (Flatten)              (None, 28800)         0           max_pooling2d_1[0][0]
____________________________________________________________________________________________________
flatten_2 (Flatten)              (None, 12544)         0           max_pooling2d_2[0][0]
____________________________________________________________________________________________________
concatenate_1 (Concatenate)      (None, 41344)         0           flatten_1[0][0]
                                                                   flatten_2[0][0]
____________________________________________________________________________________________________
dense_1 (Dense)                  (None, 10)            413450      concatenate_1[0][0]
____________________________________________________________________________________________________
dense_2 (Dense)                  (None, 1)             11          dense_1[0][0]
====================================================================================================
Total params: 415,045
Trainable params: 415,045
Non-trainable params: 0
____________________________________________________________________________________________________
```

A plot of the network graph is shown below:

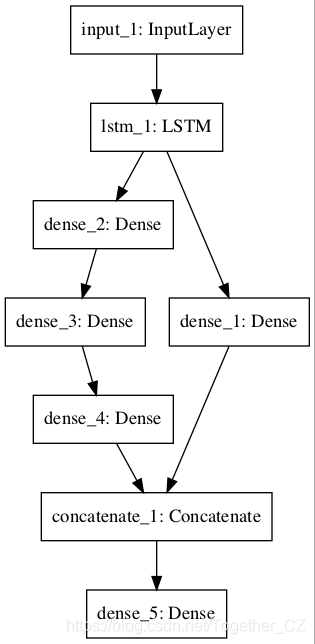
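Concatenation simply places the two flattened vectors end to end, so the merged length is the sum of the branch lengths. The sketch below checks the numbers in the summary using plain Python, again assuming unpadded stride-1 convolutions and non-overlapping 2×2 pooling. `branch_features` is a hypothetical helper written for this example.

```python
def branch_features(image_size, kernel, filters, pool=2):
    """Length of the flattened output of one conv -> pool -> flatten branch."""
    conv = image_size - kernel + 1   # unpadded convolution, stride 1
    pooled = conv // pool            # non-overlapping pooling
    return pooled * pooled * filters


flat1 = branch_features(64, kernel=4, filters=32)  # 30 * 30 * 32
flat2 = branch_features(64, kernel=8, filters=16)  # 28 * 28 * 16
merged = flat1 + flat2

print(flat1, flat2, merged)  # 28800 12544 41344
print(merged * 10 + 10)      # 413450 parameters in the interpretation layer
```

Almost all of the model's parameters sit in the Dense layer that reads the merged vector, which is worth keeping in mind when choosing how many branches to concatenate.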

- Shared Feature Extraction Layer

In this section, we will use two parallel submodels to interpret the output of an LSTM feature extractor for sequence classification. The input to the model is 100 time steps of 1 feature. An LSTM layer with 10 memory cells interprets this sequence. The first interpretation model is a shallow single fully connected layer, and the second is a deep 3-layer model. The outputs of both interpretation models are concatenated into one long vector that is passed to the output layer used to make a binary prediction.

```python
# Shared Feature Extraction Layer
from keras.utils import plot_model
from keras.models import Model
from keras.layers import Input
from keras.layers import Dense
from keras.layers import LSTM
from keras.layers import concatenate

# define input
visible = Input(shape=(100,1))
# feature extraction
extract1 = LSTM(10)(visible)
# first interpretation model
interp1 = Dense(10, activation='relu')(extract1)
# second interpretation model
interp11 = Dense(10, activation='relu')(extract1)
interp12 = Dense(20, activation='relu')(interp11)
interp13 = Dense(10, activation='relu')(interp12)
# merge interpretation
merge = concatenate([interp1, interp13])
# output
output = Dense(1, activation='sigmoid')(merge)
model = Model(inputs=visible, outputs=output)
# summarize layers (summary() prints the table itself)
model.summary()
# plot graph (requires pydot and graphviz)
plot_model(model, to_file='shared_feature_extractor.png')
```

Running the example prints the following summary:

```
____________________________________________________________________________________________________
Layer (type)                     Output Shape          Param #     Connected to
====================================================================================================
input_1 (InputLayer)             (None, 100, 1)        0
____________________________________________________________________________________________________
lstm_1 (LSTM)                    (None, 10)            480         input_1[0][0]
____________________________________________________________________________________________________
dense_2 (Dense)                  (None, 10)            110         lstm_1[0][0]
____________________________________________________________________________________________________
dense_3 (Dense)                  (None, 20)            220         dense_2[0][0]
____________________________________________________________________________________________________
dense_1 (Dense)                  (None, 10)            110         lstm_1[0][0]
____________________________________________________________________________________________________
dense_4 (Dense)                  (None, 10)            210         dense_3[0][0]
____________________________________________________________________________________________________
concatenate_1 (Concatenate)      (None, 20)            0           dense_1[0][0]
                                                                   dense_4[0][0]
____________________________________________________________________________________________________
dense_5 (Dense)                  (None, 1)             21          concatenate_1[0][0]
====================================================================================================
Total params: 1,151
Trainable params: 1,151
Non-trainable params: 0
____________________________________________________________________________________________________
```

A plot of the network graph is saved to shared_feature_extractor.png.

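V. Multiple Input and Output Models

The functional API can also be used to develop more complex models with multiple inputs, possibly with different modalities. It can also be used to develop models that produce multiple outputs. We will look at an example of each in this section.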

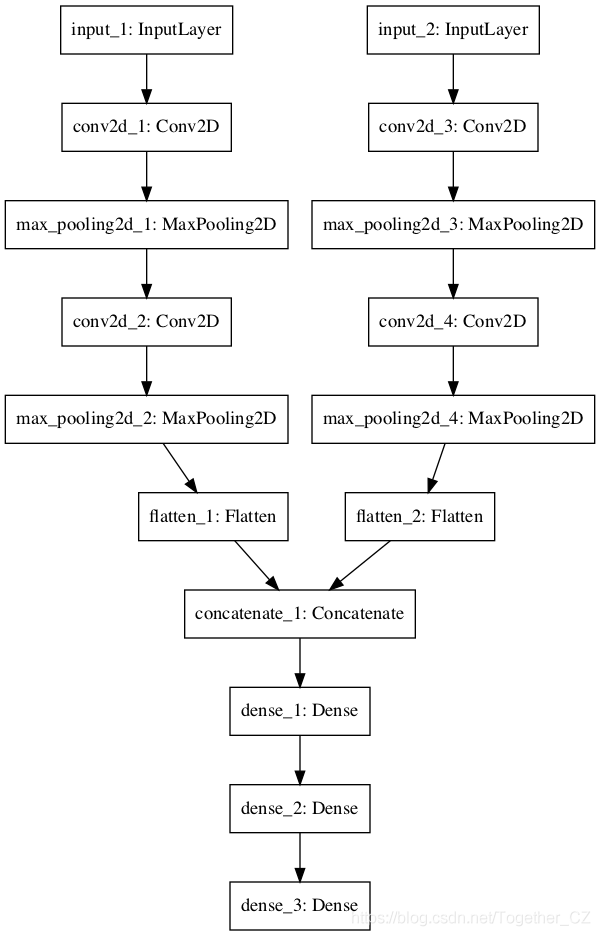

- Multiple Input Model

We will develop an image classification model that takes two versions of the image as input, each of a different size: specifically a black-and-white 64×64 version and a color 32×32 version. Separate feature extraction CNN models operate on each, then the results from both models are concatenated for interpretation and ultimate prediction.

Note that in the creation of the Model() instance, we define the two input layers as an array. Specifically:

```python
model = Model(inputs=[visible1, visible2], outputs=output)
```

The complete example is listed below:

```python
# Multiple Inputs
from keras.utils import plot_model
from keras.models import Model
from keras.layers import Input
from keras.layers import Dense
from keras.layers import Flatten
from keras.layers import Conv2D
from keras.layers import MaxPooling2D
from keras.layers import concatenate

# first input model
visible1 = Input(shape=(64,64,1))
conv11 = Conv2D(32, kernel_size=4, activation='relu')(visible1)
pool11 = MaxPooling2D(pool_size=(2, 2))(conv11)
conv12 = Conv2D(16, kernel_size=4, activation='relu')(pool11)
pool12 = MaxPooling2D(pool_size=(2, 2))(conv12)
flat1 = Flatten()(pool12)
# second input model
visible2 = Input(shape=(32,32,3))
conv21 = Conv2D(32, kernel_size=4, activation='relu')(visible2)
pool21 = MaxPooling2D(pool_size=(2, 2))(conv21)
conv22 = Conv2D(16, kernel_size=4, activation='relu')(pool21)
pool22 = MaxPooling2D(pool_size=(2, 2))(conv22)
flat2 = Flatten()(pool22)
# merge input models
merge = concatenate([flat1, flat2])
# interpretation model
hidden1 = Dense(10, activation='relu')(merge)
hidden2 = Dense(10, activation='relu')(hidden1)
output = Dense(1, activation='sigmoid')(hidden2)
model = Model(inputs=[visible1, visible2], outputs=output)
# summarize layers (summary() prints the table itself)
model.summary()
# plot graph (requires pydot and graphviz)
plot_model(model, to_file='multiple_inputs.png')
```

Running the example prints the following summary:

```
____________________________________________________________________________________________________
Layer (type)                     Output Shape          Param #     Connected to
====================================================================================================
input_1 (InputLayer)             (None, 64, 64, 1)     0
____________________________________________________________________________________________________
input_2 (InputLayer)             (None, 32, 32, 3)     0
____________________________________________________________________________________________________
conv2d_1 (Conv2D)                (None, 61, 61, 32)    544         input_1[0][0]
____________________________________________________________________________________________________
conv2d_3 (Conv2D)                (None, 29, 29, 32)    1568        input_2[0][0]
____________________________________________________________________________________________________
max_pooling2d_1 (MaxPooling2D)   (None, 30, 30, 32)    0           conv2d_1[0][0]
____________________________________________________________________________________________________
max_pooling2d_3 (MaxPooling2D)   (None, 14, 14, 32)    0           conv2d_3[0][0]
____________________________________________________________________________________________________
conv2d_2 (Conv2D)                (None, 27, 27, 16)    8208        max_pooling2d_1[0][0]
____________________________________________________________________________________________________
conv2d_4 (Conv2D)                (None, 11, 11, 16)    8208        max_pooling2d_3[0][0]
____________________________________________________________________________________________________
max_pooling2d_2 (MaxPooling2D)   (None, 13, 13, 16)    0           conv2d_2[0][0]
____________________________________________________________________________________________________
max_pooling2d_4 (MaxPooling2D)   (None, 5, 5, 16)      0           conv2d_4[0][0]
____________________________________________________________________________________________________
flatten_1 (Flatten)              (None, 2704)          0           max_pooling2d_2[0][0]
____________________________________________________________________________________________________
flatten_2 (Flatten)              (None, 400)           0           max_pooling2d_4[0][0]
____________________________________________________________________________________________________
concatenate_1 (Concatenate)      (None, 3104)          0           flatten_1[0][0]
                                                                   flatten_2[0][0]
____________________________________________________________________________________________________
dense_1 (Dense)                  (None, 10)            31050       concatenate_1[0][0]
____________________________________________________________________________________________________
dense_2 (Dense)                  (None, 10)            110         dense_1[0][0]
____________________________________________________________________________________________________
dense_3 (Dense)                  (None, 1)             11          dense_2[0][0]
====================================================================================================
Total params: 49,699
Trainable params: 49,699
Non-trainable params: 0
____________________________________________________________________________________________________
```

A plot of the network graph is shown below:

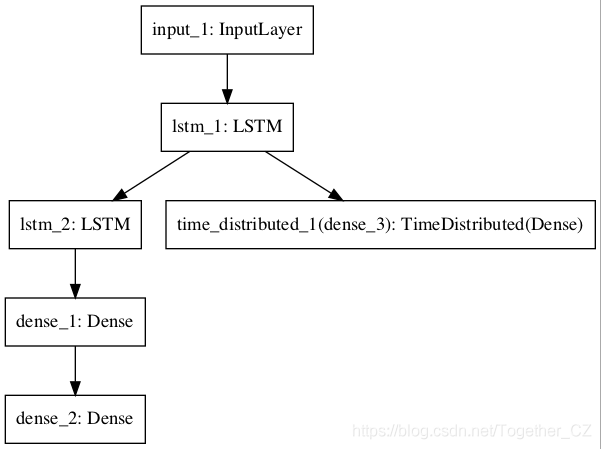

- Multiple Output Model

In this section, we will develop a model that makes two different types of predictions. Given an input sequence of 100 time steps of one feature, the model will both classify the sequence and output a new sequence with the same length. An LSTM layer interprets the input sequence and returns the hidden state for each time step. The first output model creates a stacked LSTM, interprets the features, and makes a binary prediction. The second output model uses the same output layer to make a real-valued prediction for each input time step.

```python
# Multiple Outputs
from keras.utils import plot_model
from keras.models import Model
from keras.layers import Input
from keras.layers import Dense
from keras.layers import LSTM
from keras.layers import TimeDistributed

# input layer
visible = Input(shape=(100,1))
# feature extraction
extract = LSTM(10, return_sequences=True)(visible)
# classification output
class11 = LSTM(10)(extract)
class12 = Dense(10, activation='relu')(class11)
output1 = Dense(1, activation='sigmoid')(class12)
# sequence output
output2 = TimeDistributed(Dense(1, activation='linear'))(extract)
# output
model = Model(inputs=visible, outputs=[output1, output2])
# summarize layers (summary() prints the table itself)
model.summary()
# plot graph (requires pydot and graphviz)
plot_model(model, to_file='multiple_outputs.png')
```

Running the example prints the following summary:

```
____________________________________________________________________________________________________
Layer (type)                     Output Shape          Param #     Connected to
====================================================================================================
input_1 (InputLayer)             (None, 100, 1)        0
____________________________________________________________________________________________________
lstm_1 (LSTM)                    (None, 100, 10)       480         input_1[0][0]
____________________________________________________________________________________________________
lstm_2 (LSTM)                    (None, 10)            840         lstm_1[0][0]
____________________________________________________________________________________________________
dense_1 (Dense)                  (None, 10)            110         lstm_2[0][0]
____________________________________________________________________________________________________
dense_2 (Dense)                  (None, 1)             11          dense_1[0][0]
____________________________________________________________________________________________________
time_distributed_1 (TimeDistribu (None, 100, 1)        11          lstm_1[0][0]
====================================================================================================
Total params: 1,452
Trainable params: 1,452
Non-trainable params: 0
____________________________________________________________________________________________________
```

A plot of the network graph is saved to multiple_outputs.png.
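One detail in this summary is easy to miss: the TimeDistributed layer has only 11 parameters even though it produces 100 outputs, because the same wrapped Dense(1) weights are reused at every time step. The plain-Python sketch below reproduces the counts. The helper names are made up for this example, and the LSTM formula assumes the standard four weight sets.

```python
def dense_params(n_inputs, n_units):
    """Weights plus one bias per unit."""
    return n_inputs * n_units + n_units


def lstm_params(n_features, n_units):
    """Four weight sets, each with input weights, recurrent weights and a bias."""
    return 4 * (n_units * n_features + n_units * n_units + n_units)


first_lstm = lstm_params(1, 10)     # reads the raw 1-feature sequence
second_lstm = lstm_params(10, 10)   # reads the 10 features from the first LSTM

# The wrapped Dense(1) sees 10 features per step. Its weights are shared
# across all time steps, so the count does not grow with sequence length.
sequence_head = dense_params(10, 1)

total = (first_lstm + second_lstm
         + dense_params(10, 10) + dense_params(10, 1)
         + sequence_head)
print(first_lstm, second_lstm, sequence_head, total)  # 480 840 11 1452
```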

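VI. Best Practices

In this section, I want to give you some tips to get the most out of the functional API when you are defining your own models.

- Consistent Variable Names. Use the same variable name for the input (visible) and output layers (output) and perhaps even the hidden layers (hidden1, hidden2). It will help to connect things together correctly.

- Review Layer Summary. Always print the model summary and review the layer outputs to ensure that the model was connected together as you expected.

- Review Graph Plots. Always create a plot of the model graph and review it to ensure that everything was put together as you intended.

- Name the Layers. You can assign names to layers that are used when reviewing summaries and plots of the model graph. For example: Dense(1, name='hidden1').

- Separate Submodels. Consider separating out the development of the submodels and combining the submodels together at the end.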