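Hi everyone, I'm back. This time we will write a Python crawler that scrapes real-estate data for Ürümqi and shows it on a map. The map tool I used is BDP个人版 (BDP Personal Edition), a free online data analysis and visualization tool that can import CSV or Excel data.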

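- As always, start with the plan: crawl the site, extract each property's name, address, price, longitude and latitude, and save them to an Excel file. Then upload the Excel file to the BDP website and generate a map report.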

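This time I used the Scrapy framework. It is probably overkill for this job, but I had just finished learning it and wanted some practice. Before writing any code, I still recommend analyzing the website and its data first, because good analysis saves a lot of effort later. The site 乌鲁木齐吉屋网 (wlmq.jiwu.com) has fairly complete data. Each listing page gives a list of properties and a link to the next page. Clicking a property opens its detail page, which contains the details we need (name, address, price, longitude and latitude). A pipeline then saves each item to Excel, and finally BDP generates the map report. Enough talk, here is the code: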

JiwuspiderSpider.py

    # -*- coding: utf-8 -*-
    from scrapy import Spider, Request
    import re
    from jiwu.items import JiwuItem


    class JiwuspiderSpider(Spider):
        name = "jiwuspider"
        allowed_domains = ["wlmq.jiwu.com"]
        start_urls = ['http://wlmq.jiwu.com/loupan']

        def parse(self, response):
            """
            Parse the list of properties on each listing page.
            :param response:
            :return:
            """
            for url in response.xpath('//a[@class="index_scale"]/@href').extract():
                # Follow each detail-page URL; urljoin also copes with relative hrefs
                yield Request(response.urljoin(url), self.parse_html)
            # If the "next page" link still exists, take its URL
            nextpage = response.xpath('//a[@class="tg-rownum-next index-icon"]/@href').extract_first()
            # Only continue when a next page was found
            if nextpage:
                # Call back into parse to handle the next listing page
                yield Request(response.urljoin(nextpage), self.parse)

        def parse_html(self, response):
            """
            Parse the detail page of one property and build an item.
            :param response:
            :return:
            """
            pattern = re.compile('<script type="text/javascript">.*?lng = \'(.*?)\';.*?lat = \'(.*?)\';.*?bname = \'(.*?)\';.*?'
                                 'address = \'(.*?)\';.*?price = \'(.*?)\';', re.S)
            results = re.findall(pattern, response.text)
            for result in results:
                # Create a fresh item per match so yielded items do not share state
                item = JiwuItem()
                item['name'] = result[2]
                item['address'] = result[3]
                # Keep only the digits of the price; use 0 when there are none
                pricestr = result[4]
                s = re.findall(r'(\d+)', pricestr)
                if len(s) == 0:
                    item['price'] = 0
                else:
                    item['price'] = s[0]
                item['lng'] = result[0]
                item['lat'] = result[1]
                yield item
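To see what the detail-page regex captures without running a crawl, the extraction can be tried on a small string. The sketch below is only an illustration: `SAMPLE_HTML` is a made-up excerpt (the real page markup may differ), and `parse_price` and `extract_listing` are hypothetical helper names that do not appear in the spider. Unlike the spider, it converts the price to an `int`.

```python
import re

# Hypothetical excerpt of a detail page; the real markup may differ.
SAMPLE_HTML = """
<script type="text/javascript">
    var lng = '87.616848';
    var lat = '43.825592';
    var bname = 'Example Garden';
    var address = 'No. 1 Example Road';
    var price = 'about 7500 yuan';
</script>
"""

# Same pattern as in the spider: non-greedy groups, re.S so '.' spans newlines.
DETAIL_PATTERN = re.compile(
    r'<script type="text/javascript">.*?lng = \'(.*?)\';.*?lat = \'(.*?)\';'
    r'.*?bname = \'(.*?)\';.*?address = \'(.*?)\';.*?price = \'(.*?)\';',
    re.S,
)


def parse_price(text):
    """Return the first run of digits in text as an int, or 0 if there is none."""
    match = re.search(r'\d+', text)
    return int(match.group()) if match else 0


def extract_listing(html):
    """Return a dict for the first match in html, or None if nothing matches."""
    match = DETAIL_PATTERN.search(html)
    if match is None:
        return None
    lng, lat, name, address, price = match.groups()
    return {'name': name, 'address': address, 'price': parse_price(price),
            'lng': lng, 'lat': lat}


listing = extract_listing(SAMPLE_HTML)
print(listing)
```

If the site changes the order of the variables in its script block, this pattern stops matching, so checking for `None` (or an empty `findall` result in the spider) is worth keeping.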

items.py

    # -*- coding: utf-8 -*-
    # Define here the models for your scraped items
    #
    # See documentation in:
    # http://doc.scrapy.org/en/latest/topics/items.html
    import scrapy


    class JiwuItem(scrapy.Item):
        # One field per value scraped from the detail page
        name = scrapy.Field()
        price = scrapy.Field()
        address = scrapy.Field()
        lng = scrapy.Field()
        lat = scrapy.Field()

pipelines.py (note that the MongoDB save call is commented out here; pick whichever storage method you prefer)

    # -*- coding: utf-8 -*-
    # Define your item pipelines here
    #
    # Don't forget to add your pipeline to the ITEM_PIPELINES setting
    # See: http://doc.scrapy.org/en/latest/topics/item-pipeline.html
    import pymongo
    from openpyxl import Workbook


    class JiwuPipeline(object):

        def __init__(self, settings):
            # Workbook that collects one row per item
            self.wb = Workbook()
            self.ws = self.wb.active
            # Header row: name, address, price, longitude, latitude
            self.ws.append(['小区名称', '地址', '价格', '经度', '纬度'])
            # Read the MongoDB connection info from the project settings
            host = settings['MONGODB_URL']
            port = settings['MONGODB_PORT']
            dbname = settings['MONGODB_DBNAME']
            client = pymongo.MongoClient(host=host, port=port)
            # Select the database and the collection
            db = client[dbname]
            self.table = db[settings['MONGODB_TABLE']]

        @classmethod
        def from_crawler(cls, crawler):
            # scrapy.conf is gone from newer Scrapy releases; use the crawler's settings
            return cls(crawler.settings)

        def process_item(self, item, spider):
            jiwu = dict(item)
            # self.table.insert_one(jiwu)  # uncomment to also save to MongoDB
            line = [item['name'], item['address'], str(item['price']), item['lng'], item['lat']]
            self.ws.append(line)
            self.wb.save('jiwu.xlsx')
            return item
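The pipeline only runs if it is enabled in the project's settings.py, and it reads four `MONGODB_*` keys from there. The original post does not show that file, so the fragment below is a guess at its shape. The module path `jiwu.pipelines.JiwuPipeline` is inferred from the `from jiwu.items import JiwuItem` import, and the host, port, database and collection values are placeholders.

```python
# settings.py (fragment): every value below is a placeholder, not the author's real config

# Enable the pipeline; 300 is an arbitrary priority within Scrapy's 0-1000 range.
ITEM_PIPELINES = {
    'jiwu.pipelines.JiwuPipeline': 300,
}

# Keys read by JiwuPipeline.__init__; hypothetical local MongoDB values.
MONGODB_URL = 'localhost'
MONGODB_PORT = 27017
MONGODB_DBNAME = 'jiwu'
MONGODB_TABLE = 'loupan'
```

With these in place, running `scrapy crawl jiwuspider` from the project directory should start the crawl and write `jiwu.xlsx` to the working directory.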

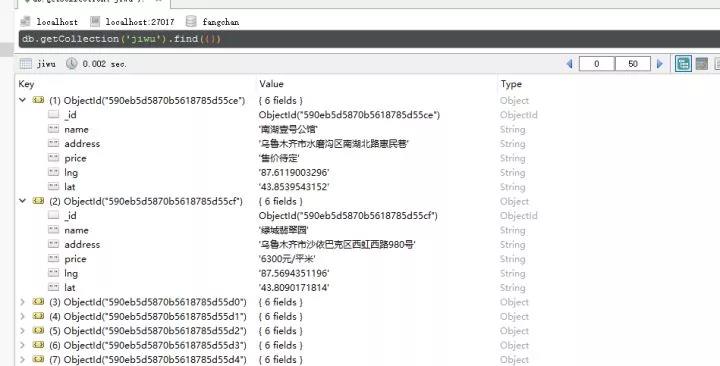

The final data used for the report

The MongoDB database:

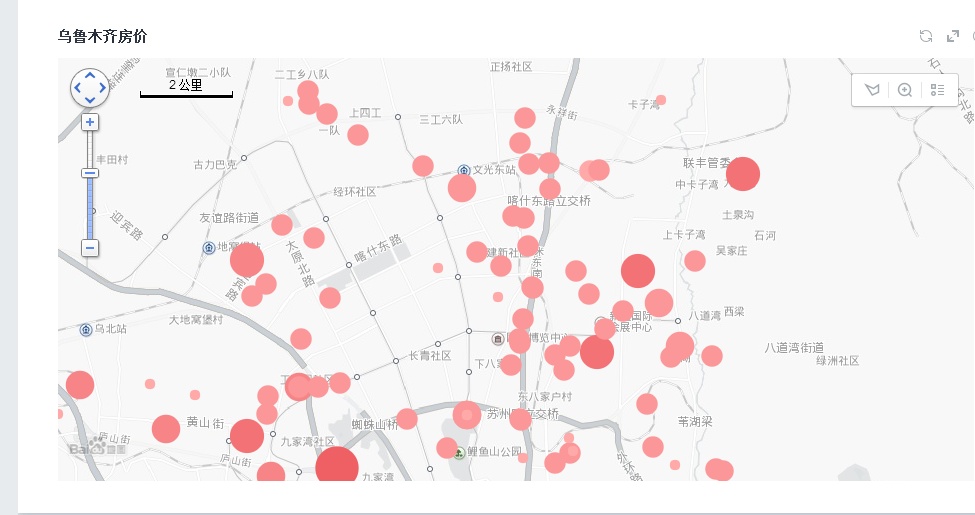

The resulting map report: https://me.bdp.cn/share/index.html?shareId=sdo_b697418ff7dc4f928bb25e3ac1d52348
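Since BDP is described as accepting CSV as well as Excel, the export step could also write a CSV file with only the standard library. This is a rough sketch and not part of the original project: `write_listings_csv` is a hypothetical helper, the sample row is made up, and the `utf-8-sig` encoding is my assumption to help spreadsheet tools detect UTF-8 (the post does not say what encoding BDP expects).

```python
import csv

# Column order matches the item fields used by the spider.
FIELDS = ['name', 'address', 'price', 'lng', 'lat']


def write_listings_csv(path, listings):
    """Write an iterable of dicts keyed by FIELDS to a CSV file with a header row."""
    # utf-8-sig prepends a BOM; newline='' lets the csv module control line endings.
    with open(path, 'w', newline='', encoding='utf-8-sig') as f:
        writer = csv.DictWriter(f, fieldnames=FIELDS)
        writer.writeheader()
        writer.writerows(listings)


# Made-up row for illustration only.
sample = [
    {'name': 'Example Garden', 'address': 'No. 1 Example Road',
     'price': 7500, 'lng': '87.616848', 'lat': '43.825592'},
]
write_listings_csv('jiwu_sample.csv', sample)
```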