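Recently, Peter Roelants, head of research at the background-check company Onfido, published an article on Medium titled "Higher-Level APIs in TensorFlow". Using a worked example, it shows in detail how to train a model with TensorFlow's high-level APIs (Estimator, Experiment and Dataset). Notably, Experiment and Dataset can also be used on their own. These high-level APIs are included in the recently released TensorFlow 1.3.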

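There are many popular libraries built on TensorFlow, such as Keras, TFLearn and Sonnet. They make it easy to train models without touching the low-level functions. The Keras API is currently being integrated directly into TensorFlow, and TensorFlow itself provides more and more high-level constructs, some of which are included in the recently released TensorFlow 1.3.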

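In this article we walk through an example to learn how to use some of these high-level constructs, including Estimator, Experiment and Dataset. The article assumes basic familiarity with TensorFlow.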

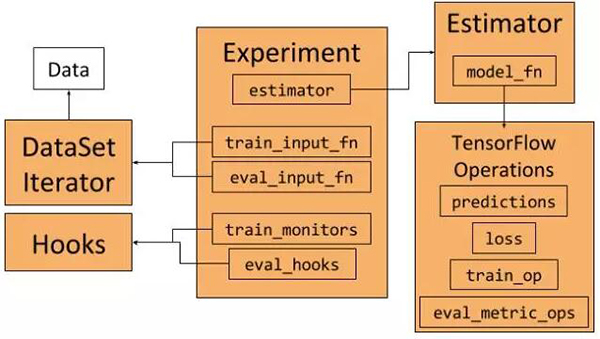

The Experiment, Estimator and Dataset frameworks and how they interact (each component is explained below)

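In this article we use MNIST as the dataset. It is an easy-to-use dataset that can be accessed directly through TensorFlow. The complete example code is available as a gist, and it is also reproduced in full at the end of this article. One benefit of using these frameworks is that we don't need to deal with graphs and sessions directly.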

Estimator

The Estimator class represents a model, as well as how that model is trained and evaluated. We can build an estimator like this:

```python
def get_estimator(run_config, params):
    """Return the model as a Tensorflow Estimator object."""
    return tf.estimator.Estimator(
        model_fn=model_fn,  # First-class function
        params=params,  # HParams
        config=run_config  # RunConfig
    )
```

To build an Estimator, we need to pass in a model function, a collection of parameters and some configuration.

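- The parameters should be a collection of the model's hyperparameters. This can be a dictionary, but in this example we represent it as an HParams object, which acts like a namedtuple.

- The configuration specifies how training and evaluation are run, and where to store the results. It is represented by a RunConfig object, which tells the Estimator everything it needs to know about the environment in which the model runs.

- The model function is a Python function that builds the model given its input (see below).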
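Here is a small pure-Python sketch of the difference between a plain dictionary and a namedtuple-like params object. ToyHParams and ToyRunConfig are hypothetical stand-ins built with collections.namedtuple. They only imitate two things: the attribute-style access of tf.contrib.training.HParams, and the way RunConfig.replace returns an updated copy. They are not the TensorFlow classes.

```python
from collections import namedtuple

# Hypothetical stand-ins: these namedtuples only imitate the read-only,
# attribute-style access of tf.contrib.training.HParams and RunConfig.
ToyHParams = namedtuple(
    "ToyHParams",
    ["learning_rate", "n_classes", "train_steps", "min_eval_frequency"])
ToyRunConfig = namedtuple("ToyRunConfig", ["model_dir", "save_checkpoints_steps"])

# The same hyperparameters as a plain dictionary...
params_dict = {"learning_rate": 0.002, "n_classes": 10,
               "train_steps": 5000, "min_eval_frequency": 100}
# ...and as a namedtuple-like object with attribute access.
params = ToyHParams(**params_dict)
print(params.learning_rate)  # 0.002

# The config is immutable here; _replace returns an updated copy, much like
# run_config.replace(...) in the full example returns a new RunConfig.
run_config = ToyRunConfig(model_dir=None, save_checkpoints_steps=None)
run_config = run_config._replace(model_dir="./mnist_training")
print(run_config)
```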

Model function

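The model function is a Python function that is passed to the Estimator as a first-class function. As we will see later, TensorFlow also uses first-class functions in other places. The benefit of representing the model as a function is that the model can be recreated over and over by calling that function. This lets the model be rebuilt during training with different inputs, for example to run validation tests while training.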
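Here is a rough sketch of why passing the model as a first-class function helps. The caller keeps only a reference to the function, so it can rebuild the model for any input. toy_model_fn and build_model are hypothetical names, and plain Python lists stand in for TensorFlow tensors and graphs.

```python
def toy_model_fn(features, params):
    """Hypothetical model function: a 'model' that scales its inputs.

    Every call builds fresh outputs for whatever input it receives.
    """
    return [params["weight"] * x for x in features]


def build_model(model_fn, features, params):
    # The caller holds only a reference to the function, so it can
    # (re)build the model as often as it likes, for any input.
    return model_fn(features, params)


params = {"weight": 0.5}
train_outputs = build_model(toy_model_fn, [1.0, 2.0, 3.0], params)  # training input
eval_outputs = build_model(toy_model_fn, [10.0], params)            # validation input
print(train_outputs, eval_outputs)  # [0.5, 1.0, 1.5] [5.0]
```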

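The model function takes the input features and the corresponding labels as tensors. It also takes a mode that signals whether the model is training, evaluating or performing inference. Its last parameter is the collection of hyperparameters, the same ones that were passed to the Estimator. The model function must return an EstimatorSpec object, which defines the complete model.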

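EstimatorSpec takes the predictions, the loss, and the training and evaluation operations, so it defines the complete model graph used for training, evaluation and inference. Because EstimatorSpec takes regular TensorFlow operations, we can use frameworks such as TF-Slim to define our own model.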
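The mode-dependent structure described above can be sketched without TensorFlow. The names in this example are hypothetical:

- ToySpec stands in for EstimatorSpec.
- The mode strings are assumptions, not the ModeKeys constants.
- The toy "loss" is an error rate rather than the cross-entropy used in the full example.

The only point is that the loss and metrics stay empty at inference, when no labels are available.

```python
from collections import namedtuple

# Hypothetical mode names; the real ones come from ModeKeys.
TRAIN, EVAL, INFER = "train", "eval", "infer"

# Hypothetical stand-in for tf.estimator.EstimatorSpec.
ToySpec = namedtuple("ToySpec", ["mode", "predictions", "loss", "eval_metric_ops"])


def toy_model_fn(features, labels, mode, params):
    """Toy two-class model whose outputs depend on the mode."""
    # One pair of class scores ("logits") per input value.
    logits = [[params["w"] * x, -params["w"] * x] for x in features]
    # argmax over the two scores gives the predicted class.
    predictions = [max(range(2), key=row.__getitem__) for row in logits]
    loss, eval_metric_ops = None, {}
    if mode != INFER:  # loss and metrics need labels, so skip them at inference
        # Toy loss: the error rate (a real model would use cross-entropy).
        errors = sum(p != y for p, y in zip(predictions, labels))
        loss = errors / len(labels)
        eval_metric_ops = {"accuracy": 1.0 - loss}
    return ToySpec(mode, predictions, loss, eval_metric_ops)


train_spec = toy_model_fn([1.0, -2.0, 3.0], [0, 1, 1], TRAIN, {"w": 1.0})
infer_spec = toy_model_fn([1.0, -2.0, 3.0], None, INFER, {"w": 1.0})
print(train_spec.predictions)  # [0, 1, 0]
print(infer_spec.loss)  # None
```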

Experiment

The Experiment class defines how a model is trained, and integrates that with the Estimator. We can create an experiment like this:

```python
experiment = tf.contrib.learn.Experiment(
    estimator=estimator,  # Estimator
    train_input_fn=train_input_fn,  # First-class function
    eval_input_fn=eval_input_fn,  # First-class function
    train_steps=params.train_steps,  # Minibatch steps
    min_eval_frequency=params.min_eval_frequency,  # Eval frequency
    train_monitors=[train_input_hook],  # Hooks for training
    eval_hooks=[eval_input_hook],  # Hooks for evaluation
    eval_steps=None  # Use evaluation feeder until it's empty
)
```

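Experiment takes as input:

- An Estimator (for example, the one defined above).

- The training and evaluation data, as first-class functions. This uses the same idea as the model function described earlier: because we pass functions instead of operations, the input graph can be rebuilt whenever needed. We will come back to this concept later.

- Training and evaluation hooks. These hooks can be used to monitor or save specific things, or to run operations in the graph or session. For example, we will use them to help initialize the data loaders.

- Various parameters that specify how long to train and when to evaluate.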
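The following is a much simplified, hypothetical sketch of how these pieces could fit together in a train-and-evaluate loop. It is not Experiment's real scheduling logic. In TensorFlow, min_eval_frequency is a minimum number of steps between evaluations, and an evaluation also requires a new checkpoint. That is why the full example sets save_checkpoints_steps to the same value. The function and argument names here are assumptions.

```python
def toy_train_and_evaluate(train_step, eval_input_fn, eval_batch,
                           train_steps, min_eval_frequency):
    """Hypothetical, simplified train-and-evaluate schedule.

    Calls train_step() train_steps times. After every min_eval_frequency
    steps (and after the last step) it evaluates on a freshly built eval
    input, consuming it until it is exhausted (the idea behind eval_steps=None).
    """
    history = []
    for step in range(1, train_steps + 1):
        train_step()
        if step % min_eval_frequency == 0 or step == train_steps:
            # eval_input_fn is first-class, so each evaluation rebuilds the input.
            scores = [eval_batch(batch) for batch in eval_input_fn()]
            history.append((step, sum(scores) / len(scores)))
    return history


# Usage with dummy pieces: a step counter and a two-batch eval input.
state = {"step": 0}


def train_step():
    state["step"] += 1


def eval_input_fn():
    return iter([[1, 2], [3]])  # a finite input: two batches, then exhausted


def eval_batch(batch):
    return float(state["step"])  # dummy "metric": the current step


history = toy_train_and_evaluate(train_step, eval_input_fn, eval_batch,
                                 train_steps=250, min_eval_frequency=100)
print(history)  # [(100, 100.0), (200, 200.0), (250, 250.0)]
```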

Once the experiment is defined, we can train and evaluate the model by running it with learn_runner.run:

```python
learn_runner.run(
    experiment_fn=experiment_fn,  # First-class function
    run_config=run_config,  # RunConfig
    schedule="train_and_evaluate",  # What to run
    hparams=params  # HParams
)
```

As with the model function and the data functions, learn_runner takes as a parameter the function that creates the experiment.
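The idea of handing learn_runner a factory plus a schedule name can be sketched as follows. ToyExperiment and toy_run are hypothetical and only loosely modelled on learn_runner. The sketch assumes that the schedule string names a method on the experiment (such as train_and_evaluate), and that this method is looked up and called after the experiment has been built from experiment_fn.

```python
class ToyExperiment:
    """Hypothetical experiment exposing schedule methods by name."""

    def __init__(self, run_config, hparams):
        self.run_config = run_config
        self.hparams = hparams
        self.calls = []

    def train(self):
        self.calls.append("train")

    def evaluate(self):
        self.calls.append("evaluate")

    def train_and_evaluate(self):
        self.train()
        self.evaluate()
        return self.calls


def toy_run(experiment_fn, run_config, schedule, hparams):
    """Build the experiment from its factory, then run the named schedule."""
    experiment = experiment_fn(run_config, hparams)  # built on demand
    return getattr(experiment, schedule)()           # e.g. "train_and_evaluate"


calls = toy_run(ToyExperiment, run_config={"model_dir": "./mnist_training"},
                schedule="train_and_evaluate", hparams={"learning_rate": 0.002})
print(calls)  # ['train', 'evaluate']
```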

Dataset

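We use the Dataset class and its corresponding Iterator to represent our training and evaluation data. They also create the data feeders that iterate over the data during training. In this example we use the MNIST data available in TensorFlow and build a Dataset wrapper around it. For example, we represent the training input data as: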

```python
# Define the training inputs
def get_train_inputs(batch_size, mnist_data):
    """Return the input function to get the training data.

    Args:
        batch_size (int): Batch size of training iterator that is returned
                          by the input function.
        mnist_data (Object): Object holding the loaded mnist data.

    Returns:
        (Input function, IteratorInitializerHook):
            - Function that returns (features, labels) when called.
            - Hook to initialise input iterator.
    """
    iterator_initializer_hook = IteratorInitializerHook()

    def train_inputs():
        """Returns training set as Operations.

        Returns:
            (features, labels) Operations that iterate over the dataset
            on every evaluation
        """
        with tf.name_scope('Training_data'):
            # Get Mnist data
            images = mnist_data.train.images.reshape([-1, 28, 28, 1])
            labels = mnist_data.train.labels
            # Define placeholders
            images_placeholder = tf.placeholder(
                images.dtype, images.shape)
            labels_placeholder = tf.placeholder(
                labels.dtype, labels.shape)
            # Build dataset iterator
            dataset = tf.contrib.data.Dataset.from_tensor_slices(
                (images_placeholder, labels_placeholder))
            dataset = dataset.repeat(None)  # Infinite iterations
            dataset = dataset.shuffle(buffer_size=10000)
            dataset = dataset.batch(batch_size)
            iterator = dataset.make_initializable_iterator()
            next_example, next_label = iterator.get_next()
            # Set runhook to initialize iterator (parentheses keep the
            # multi-line lambda syntactically valid)
            iterator_initializer_hook.iterator_initializer_func = (
                lambda sess: sess.run(
                    iterator.initializer,
                    feed_dict={images_placeholder: images,
                               labels_placeholder: labels}))
            # Return batched (features, labels)
            return next_example, next_label

    # Return function and hook
    return train_inputs, iterator_initializer_hook
```

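Calling get_train_inputs returns two things: a first-class function that creates the data-loading operations in the TensorFlow graph, and a hook that initializes the iterator.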

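In this example, the MNIST data is initially represented as NumPy arrays. We create placeholder tensors to feed in the data; using placeholders avoids copying the data into the graph. Next, we create a sliced dataset with from_tensor_slices. We make sure the dataset repeats indefinitely (the experiment takes care of how long to train), that the data is shuffled, and that it is split into batches of the required size.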
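The repeat, shuffle and batch steps can be imitated with plain Python generators, which may help when reasoning about what the input pipeline produces. This is a sketch under assumptions, not the tf.contrib.data implementation. The helpers repeat, shuffle and batch are hypothetical. The shuffle helper follows the buffered scheme that Dataset.shuffle is documented to use: keep buffer_size elements, emit a random one, and refill its slot.

```python
import itertools
import random


def repeat(elements):
    """Cycle over the elements forever, like dataset.repeat(None)."""
    items = list(elements)
    if not items:  # nothing to repeat; avoid an endless empty loop
        return
    while True:
        for item in items:
            yield item


def shuffle(stream, buffer_size, rng):
    """Buffered shuffle: fill a buffer, then emit a random element and refill its slot."""
    buffer = []
    for item in stream:
        if len(buffer) < buffer_size:
            buffer.append(item)
            continue
        i = rng.randrange(buffer_size)
        yield buffer[i]
        buffer[i] = item
    # Only reached for a finite stream: drain the remaining elements.
    rng.shuffle(buffer)
    yield from buffer


def batch(stream, batch_size):
    """Group consecutive elements into lists of batch_size; the last may be shorter."""
    it = iter(stream)
    while True:
        chunk = list(itertools.islice(it, batch_size))
        if not chunk:
            return
        yield chunk


rng = random.Random(0)  # fixed seed so the sketch is reproducible
# Infinite training stream: repeat -> shuffle -> batch, the order used in train_inputs().
train_batches = batch(shuffle(repeat(range(10)), buffer_size=4, rng=rng), batch_size=3)
first_five = list(itertools.islice(train_batches, 5))
print(first_five)

# A finite stream (no repeat) still yields every element exactly once.
finite = list(batch(shuffle(range(10), buffer_size=4, rng=rng), batch_size=4))
print([len(b) for b in finite])  # [4, 4, 2]
```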

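To iterate over the data, we create an iterator on top of the dataset. Because we use placeholders, we must initialize them with the NumPy data in the session that runs the graph. We do this by creating an initializable iterator. When the graph is created, we also create a custom IteratorInitializerHook object that initializes the iterator: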

```python
class IteratorInitializerHook(tf.train.SessionRunHook):
    """Hook to initialise data iterator after Session is created."""

    def __init__(self):
        super(IteratorInitializerHook, self).__init__()
        self.iterator_initializer_func = None

    def after_create_session(self, session, coord):
        """Initialise the iterator after the session has been created."""
        self.iterator_initializer_func(session)
```

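IteratorInitializerHook inherits from SessionRunHook. Once the session has been created, the hook's after_create_session method is called, and it initializes the placeholders with the correct data. The hook is returned by get_train_inputs and passed to the Experiment object when the experiment is created.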
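The order of events can be simulated without TensorFlow. First, the input function registers an initializer while the graph is built. Later, the session triggers it through the hook. All names below are hypothetical: toy_create_session merely mimics a session that calls after_create_session on each hook, and a dictionary stands in for the iterator's state.

```python
class ToyIteratorInitializerHook:
    """Hypothetical analogue of IteratorInitializerHook (no TensorFlow)."""

    def __init__(self):
        # Filled in later, when the input function builds its "graph".
        self.iterator_initializer_func = None

    def after_create_session(self, session, coord):
        # Called once the session exists; now the data can be fed in.
        self.iterator_initializer_func(session)


def get_toy_inputs(data):
    """Return (input_fn, hook), mirroring the shape of get_train_inputs."""
    hook = ToyIteratorInitializerHook()

    def input_fn():
        iterator_state = {"data": None, "session": None}  # stands in for the iterator
        # Defer feeding the data; the lambda closes over this call's state.
        hook.iterator_initializer_func = (
            lambda sess: iterator_state.update(data=list(data), session=sess))
        return iterator_state

    return input_fn, hook


def toy_create_session(hooks):
    """Create a dummy session and notify every hook."""
    session = object()  # placeholder for a real tf.Session
    for hook in hooks:
        hook.after_create_session(session, coord=None)
    return session


input_fn, hook = get_toy_inputs([1, 2, 3])
state = input_fn()                    # 1. build the input "graph" (nothing fed yet)
print(state["data"])                  # None
session = toy_create_session([hook])  # 2. session created, so the hook feeds the data
print(state["data"])                  # [1, 2, 3]
```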

The data-loading operations returned by train_inputs are TensorFlow operations that return a new batch every time they are evaluated.
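This pure-Python sketch illustrates two things. First, it makes "a new batch every time they are evaluated" concrete. Second, it shows why the evaluation input in the full example eventually runs out, since that input does not call repeat.

ToyIterator, ToyOutOfRangeError and make_batches are hypothetical names. In TensorFlow itself, running get_next() on an exhausted iterator raises tf.errors.OutOfRangeError, which is what lets evaluation with eval_steps=None stop at the end of the data. With the 10,000 MNIST test images and a batch size of 128, this gives 78 full batches plus one batch of 16.

```python
class ToyOutOfRangeError(Exception):
    """Stands in for tf.errors.OutOfRangeError in this sketch."""


class ToyIterator:
    """Hypothetical iterator: each get_next() call returns the next batch."""

    def __init__(self, batches):
        self._batches = iter(batches)

    def get_next(self):
        try:
            return next(self._batches)
        except StopIteration:
            raise ToyOutOfRangeError("end of dataset")


def make_batches(n_examples, batch_size):
    """Split example indices into consecutive batches; the last may be short."""
    return [list(range(start, min(start + batch_size, n_examples)))
            for start in range(0, n_examples, batch_size)]


# 10,000 test images in batches of 128, as in get_test_inputs (no repeat).
iterator = ToyIterator(make_batches(10000, 128))
batch_sizes = []
while True:
    try:
        batch_sizes.append(len(iterator.get_next()))
    except ToyOutOfRangeError:
        break  # the finite input is empty: evaluation stops here
print(len(batch_sizes), batch_sizes[-1])  # 79 16
```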

Running the code

Now that everything is defined, we can run the code with the following command:

```
python mnist_estimator.py --model_dir ./mnist_training --data_dir ./mnist_data
```

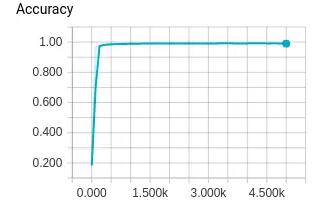

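If you don't pass any arguments, the script uses the default flags at the top of the file to decide where to save the data and the model. During training, the terminal shows the global step, loss, accuracy and similar information. In addition, the Experiment and Estimator frameworks log certain statistics that TensorBoard can display. If we run: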

```
tensorboard --logdir='./mnist_training'
```

we can see all the training statistics, such as the training loss, evaluation accuracy, time per step, and the model graph.

Visualization of the evaluation accuracy in TensorBoard

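There are few examples of the Estimator, Experiment and Dataset frameworks in TensorFlow, which is why this article was written. I hope it explains how these frameworks work, which abstractions they adopt, and how to use them. If you are interested, here is some further documentation.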

Notes on Estimator, Experiment and Dataset

- Paper "TensorFlow Estimators: Managing Simplicity vs. Flexibility in High-Level Machine Learning Frameworks": https://terrytangyuan.github.io/data/papers/tf-estimators-kdd-paper.pdf

- Using the Dataset API for TensorFlow Input Pipelines: https://www.tensorflow.org/versions/r1.3/programmers_guide/datasets

- tf.estimator.Estimator: https://www.tensorflow.org/api_docs/python/tf/estimator/Estimator

- tf.contrib.learn.RunConfig: https://www.tensorflow.org/api_docs/python/tf/contrib/learn/RunConfig

- tf.estimator.DNNClassifier: https://www.tensorflow.org/api_docs/python/tf/estimator/DNNClassifier

- tf.estimator.DNNRegressor: https://www.tensorflow.org/api_docs/python/tf/estimator/DNNRegressor

- Creating Estimators in tf.estimator: https://www.tensorflow.org/extend/estimators

- tf.contrib.learn.Head: https://www.tensorflow.org/api_docs/python/tf/contrib/learn/Head

- The Slim framework used in this article: https://github.com/tensorflow/models/tree/master/slim

Full example

```python
"""Script to illustrate usage of tf.estimator.Estimator in TF v1.3"""
import tensorflow as tf

from tensorflow.examples.tutorials.mnist import input_data as mnist_data
from tensorflow.contrib import slim
from tensorflow.contrib.learn import ModeKeys
from tensorflow.contrib.learn import learn_runner

# Show debugging output
tf.logging.set_verbosity(tf.logging.DEBUG)

# Set default flags for the output directories
FLAGS = tf.app.flags.FLAGS
tf.app.flags.DEFINE_string(
    flag_name='model_dir', default_value='./mnist_training',
    docstring='Output directory for model and training stats.')
tf.app.flags.DEFINE_string(
    flag_name='data_dir', default_value='./mnist_data',
    docstring='Directory to download the data to.')


# Define and run experiment ###############################
def run_experiment(argv=None):
    """Run the training experiment."""
    # Define model parameters
    params = tf.contrib.training.HParams(
        learning_rate=0.002,
        n_classes=10,
        train_steps=5000,
        min_eval_frequency=100
    )

    # Set the run_config and the directory to save the model and stats
    run_config = tf.contrib.learn.RunConfig()
    run_config = run_config.replace(model_dir=FLAGS.model_dir)

    learn_runner.run(
        experiment_fn=experiment_fn,  # First-class function
        run_config=run_config,  # RunConfig
        schedule="train_and_evaluate",  # What to run
        hparams=params  # HParams
    )


def experiment_fn(run_config, params):
    """Create an experiment to train and evaluate the model.

    Args:
        run_config (RunConfig): Configuration for Estimator run.
        params (HParam): Hyperparameters

    Returns:
        (Experiment) Experiment for training the mnist model.
    """
    # You can change a subset of the run_config properties as needed
    run_config = run_config.replace(
        save_checkpoints_steps=params.min_eval_frequency)
    # Define the mnist classifier
    estimator = get_estimator(run_config, params)
    # Setup data loaders
    mnist = mnist_data.read_data_sets(FLAGS.data_dir, one_hot=False)
    train_input_fn, train_input_hook = get_train_inputs(
        batch_size=128, mnist_data=mnist)
    eval_input_fn, eval_input_hook = get_test_inputs(
        batch_size=128, mnist_data=mnist)
    # Define the experiment
    experiment = tf.contrib.learn.Experiment(
        estimator=estimator,  # Estimator
        train_input_fn=train_input_fn,  # First-class function
        eval_input_fn=eval_input_fn,  # First-class function
        train_steps=params.train_steps,  # Minibatch steps
        min_eval_frequency=params.min_eval_frequency,  # Eval frequency
        train_monitors=[train_input_hook],  # Hooks for training
        eval_hooks=[eval_input_hook],  # Hooks for evaluation
        eval_steps=None  # Use evaluation feeder until it's empty
    )
    return experiment


# Define model ############################################
def get_estimator(run_config, params):
    """Return the model as a Tensorflow Estimator object.

    Args:
        run_config (RunConfig): Configuration for Estimator run.
        params (HParams): hyperparameters.
    """
    return tf.estimator.Estimator(
        model_fn=model_fn,  # First-class function
        params=params,  # HParams
        config=run_config  # RunConfig
    )


def model_fn(features, labels, mode, params):
    """Model function used in the estimator.

    Args:
        features (Tensor): Input features to the model.
        labels (Tensor): Labels tensor for training and evaluation.
        mode (ModeKeys): Specifies if training, evaluation or prediction.
        params (HParams): hyperparameters.

    Returns:
        (EstimatorSpec): Model to be run by Estimator.
    """
    is_training = mode == ModeKeys.TRAIN
    # Define model's architecture
    logits = architecture(features, is_training=is_training)
    predictions = tf.argmax(logits, axis=-1)
    # Loss, training and eval operations are not needed during inference.
    loss = None
    train_op = None
    eval_metric_ops = {}
    if mode != ModeKeys.INFER:
        loss = tf.losses.sparse_softmax_cross_entropy(
            labels=tf.cast(labels, tf.int32),
            logits=logits)
        train_op = get_train_op_fn(loss, params)
        eval_metric_ops = get_eval_metric_ops(labels, predictions)
    return tf.estimator.EstimatorSpec(
        mode=mode,
        predictions=predictions,
        loss=loss,
        train_op=train_op,
        eval_metric_ops=eval_metric_ops
    )


def get_train_op_fn(loss, params):
    """Get the training Op.

    Args:
        loss (Tensor): Scalar Tensor that represents the loss function.
        params (HParams): Hyperparameters (needs to have `learning_rate`)

    Returns:
        Training Op
    """
    return tf.contrib.layers.optimize_loss(
        loss=loss,
        global_step=tf.contrib.framework.get_global_step(),
        optimizer=tf.train.AdamOptimizer,
        learning_rate=params.learning_rate
    )


def get_eval_metric_ops(labels, predictions):
    """Return a dict of the evaluation Ops.

    Args:
        labels (Tensor): Labels tensor for training and evaluation.
        predictions (Tensor): Predictions Tensor.

    Returns:
        Dict of metric results keyed by name.
    """
    return {
        'Accuracy': tf.metrics.accuracy(
            labels=labels,
            predictions=predictions,
            name='accuracy')
    }


def architecture(inputs, is_training, scope='MnistConvNet'):
    """Return the output operation following the network architecture.

    Args:
        inputs (Tensor): Input Tensor
        is_training (bool): True iff in training mode
        scope (str): Name of the scope of the architecture

    Returns:
        Logits output Op for the network.
    """
    with tf.variable_scope(scope):
        with slim.arg_scope(
                [slim.conv2d, slim.fully_connected],
                weights_initializer=tf.contrib.layers.xavier_initializer()):
            net = slim.conv2d(inputs, 20, [5, 5], padding='VALID',
                              scope='conv1')
            net = slim.max_pool2d(net, 2, stride=2, scope='pool2')
            net = slim.conv2d(net, 40, [5, 5], padding='VALID',
                              scope='conv3')
            net = slim.max_pool2d(net, 2, stride=2, scope='pool4')
            net = tf.reshape(net, [-1, 4 * 4 * 40])
            net = slim.fully_connected(net, 256, scope='fn5')
            net = slim.dropout(net, is_training=is_training,
                               scope='dropout5')
            net = slim.fully_connected(net, 256, scope='fn6')
            net = slim.dropout(net, is_training=is_training,
                               scope='dropout6')
            net = slim.fully_connected(net, 10, scope='output',
                                       activation_fn=None)
        return net


# Define data loaders #####################################
class IteratorInitializerHook(tf.train.SessionRunHook):
    """Hook to initialise data iterator after Session is created."""

    def __init__(self):
        super(IteratorInitializerHook, self).__init__()
        self.iterator_initializer_func = None

    def after_create_session(self, session, coord):
        """Initialise the iterator after the session has been created."""
        self.iterator_initializer_func(session)


# Define the training inputs
def get_train_inputs(batch_size, mnist_data):
    """Return the input function to get the training data.

    Args:
        batch_size (int): Batch size of training iterator that is returned
                          by the input function.
        mnist_data (Object): Object holding the loaded mnist data.

    Returns:
        (Input function, IteratorInitializerHook):
            - Function that returns (features, labels) when called.
            - Hook to initialise input iterator.
    """
    iterator_initializer_hook = IteratorInitializerHook()

    def train_inputs():
        """Returns training set as Operations.

        Returns:
            (features, labels) Operations that iterate over the dataset
            on every evaluation
        """
        with tf.name_scope('Training_data'):
            # Get Mnist data
            images = mnist_data.train.images.reshape([-1, 28, 28, 1])
            labels = mnist_data.train.labels
            # Define placeholders
            images_placeholder = tf.placeholder(
                images.dtype, images.shape)
            labels_placeholder = tf.placeholder(
                labels.dtype, labels.shape)
            # Build dataset iterator
            dataset = tf.contrib.data.Dataset.from_tensor_slices(
                (images_placeholder, labels_placeholder))
            dataset = dataset.repeat(None)  # Infinite iterations
            dataset = dataset.shuffle(buffer_size=10000)
            dataset = dataset.batch(batch_size)
            iterator = dataset.make_initializable_iterator()
            next_example, next_label = iterator.get_next()
            # Set runhook to initialize iterator
            iterator_initializer_hook.iterator_initializer_func = (
                lambda sess: sess.run(
                    iterator.initializer,
                    feed_dict={images_placeholder: images,
                               labels_placeholder: labels}))
            # Return batched (features, labels)
            return next_example, next_label

    # Return function and hook
    return train_inputs, iterator_initializer_hook


def get_test_inputs(batch_size, mnist_data):
    """Return the input function to get the test data.

    Args:
        batch_size (int): Batch size of test iterator that is returned
                          by the input function.
        mnist_data (Object): Object holding the loaded mnist data.

    Returns:
        (Input function, IteratorInitializerHook):
            - Function that returns (features, labels) when called.
            - Hook to initialise input iterator.
    """
    iterator_initializer_hook = IteratorInitializerHook()

    def test_inputs():
        """Returns test set as Operations.

        Returns:
            (features, labels) Operations that iterate over the dataset
            on every evaluation
        """
        with tf.name_scope('Test_data'):
            # Get Mnist data
            images = mnist_data.test.images.reshape([-1, 28, 28, 1])
            labels = mnist_data.test.labels
            # Define placeholders
            images_placeholder = tf.placeholder(
                images.dtype, images.shape)
            labels_placeholder = tf.placeholder(
                labels.dtype, labels.shape)
            # Build dataset iterator (no repeat: one pass over the test set)
            dataset = tf.contrib.data.Dataset.from_tensor_slices(
                (images_placeholder, labels_placeholder))
            dataset = dataset.batch(batch_size)
            iterator = dataset.make_initializable_iterator()
            next_example, next_label = iterator.get_next()
            # Set runhook to initialize iterator
            iterator_initializer_hook.iterator_initializer_func = (
                lambda sess: sess.run(
                    iterator.initializer,
                    feed_dict={images_placeholder: images,
                               labels_placeholder: labels}))
            return next_example, next_label

    # Return function and hook
    return test_inputs, iterator_initializer_hook


# Run ##############################################
if __name__ == "__main__":
    tf.app.run(
        main=run_experiment
    )
```

Inference with the trained model

After training the model, we can run estimator.predict to predict the class of given images. The following code sample can be used for this.

```python
"""Script to illustrate inference of a trained tf.estimator.Estimator.

NOTE: This is dependent on mnist_estimator.py which defines the model.
mnist_estimator.py can be found at:
https://gist.github.com/peterroelants/9956ec93a07ca4e9ba5bc415b014bcca
"""
import numpy as np
import skimage.io
import tensorflow as tf

from mnist_estimator import get_estimator

# Set default flags for the output directories
FLAGS = tf.app.flags.FLAGS
tf.app.flags.DEFINE_string(
    flag_name='saved_model_dir', default_value='./mnist_training',
    docstring='Output directory for model and training stats.')

# MNIST sample images
IMAGE_URLS = [
    'https://i.imgur.com/SdYYBDt.png',  # 0
    'https://i.imgur.com/Wy7mad6.png',  # 1
    'https://i.imgur.com/nhBZndj.png',  # 2
    'https://i.imgur.com/V6XeoWZ.png',  # 3
    'https://i.imgur.com/EdxBM1B.png',  # 4
    'https://i.imgur.com/zWSDIuV.png',  # 5
    'https://i.imgur.com/Y28rZho.png',  # 6
    'https://i.imgur.com/6qsCz2W.png',  # 7
    'https://i.imgur.com/BVorzCP.png',  # 8
    'https://i.imgur.com/vt5Edjb.png',  # 9
]


def infer(argv=None):
    """Run the inference and print the results to stdout."""
    params = tf.contrib.training.HParams()  # Empty hyperparameters
    # Set the run_config where to load the model from
    run_config = tf.contrib.learn.RunConfig()
    run_config = run_config.replace(model_dir=FLAGS.saved_model_dir)
    # Initialize the estimator and run the prediction
    estimator = get_estimator(run_config, params)
    result = estimator.predict(input_fn=test_inputs)
    for r in result:
        print(r)


def test_inputs():
    """Returns training set as Operations.

    Returns:
        (features, ) Operations that iterate over the test set.
    """
    with tf.name_scope('Test_data'):
        images = tf.constant(load_images(), dtype=np.float32)
        dataset = tf.contrib.data.Dataset.from_tensor_slices((images,))
        # Return as iteration in batches of 1
        return dataset.batch(1).make_one_shot_iterator().get_next()


def load_images():
    """Load MNIST sample images from the web and return them in an array.

    Returns:
        Numpy array of size (10, 28, 28, 1) with MNIST sample images.
    """
    images = np.zeros((10, 28, 28, 1))
    for idx, url in enumerate(IMAGE_URLS):
        images[idx, :, :, 0] = skimage.io.imread(url)
    return images


# Run ##############################################
if __name__ == "__main__":
    tf.app.run(main=infer)
```

Original article: https://medium.com/onfido-tech/higher-level-apis-in-tensorflow-67bfb602e6c0

[This article is an original translation by 机器之心 (Synced), a 51CTO column organization. WeChat official account: 机器之心 (id: almosthuman2014)]