1、FAILED: Error in metadata: java.lang.RuntimeException: Unable to instantiate org

Problem:

This started after I reinstalled the MySQL database.

After installation I configured the access privileges:

- GRANT ALL PRIVILEGES ON *.* TO 'hive'@'%' IDENTIFIED BY 'hive';

- GRANT ALL PRIVILEGES ON *.* TO 'hive'@'localhost' IDENTIFIED BY 'hive';

- GRANT ALL PRIVILEGES ON *.* TO 'hive'@'127.0.0.1' IDENTIFIED BY 'hive';

Local address: 192.168.103.43; hostname: 192-168-103-43

- flush privileges;

Starting Hive then produced the following error:

- hive> show tables;

- FAILED: Error in metadata: java.lang.RuntimeException: Unable to instantiate org.apache.hadoop.hive.metastore.HiveMetaStoreClient

- FAILED: Execution Error, return code 1 from org.apache.hadoop.hive.ql.exec.DDLTask

- cd ${HIVE_HOME}/bin

- ./hive -hiveconf hive.root.logger=DEBUG,console

- hive> show tables;

Restarting Hive with debug logging, as above, gives the following error (the log will of course differ from problem to problem):

- Caused by: javax.jdo.JDOFatalDataStoreException: Access denied for user 'hive'@'192-168-103-43' (using password: YES)

- NestedThrowables:

- java.sql.SQLException: Access denied for user 'hive'@'192-168-103-43' (using password: YES)

- at org.datanucleus.jdo.NucleusJDOHelper.getJDOExceptionForNucleusException(NucleusJDOHelper.java:298)

- at org.datanucleus.jdo.JDOPersistenceManagerFactory.freezeConfiguration(JDOPersistenceManagerFactory.java:601)

- at org.datanucleus.jdo.JDOPersistenceManagerFactory.createPersistenceManagerFactory(JDOPersistenceManagerFactory.java:286)

- at org.datanucleus.jdo.JDOPersistenceManagerFactory.getPersistenceManagerFactory(JDOPersistenceManagerFactory.java:182)

- at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

- at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:39)

- at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:25)

- at java.lang.reflect.Method.invoke(Method.java:597)

- at javax.jdo.JDOHelper$16.run(JDOHelper.java:1958)

- at java.security.AccessController.doPrivileged(Native Method)

- at javax.jdo.JDOHelper.invoke(JDOHelper.java:1953)

- at javax.jdo.JDOHelper.invokeGetPersistenceManagerFactoryOnImplementation(JDOHelper.java:1159)

- at javax.jdo.JDOHelper.getPersistenceManagerFactory(JDOHelper.java:803)

- at javax.jdo.JDOHelper.getPersistenceManagerFactory(JDOHelper.java:698)

- at org.apache.hadoop.hive.metastore.ObjectStore.getPMF(ObjectStore.java:262)

- at org.apache.hadoop.hive.metastore.ObjectStore.getPersistenceManager(ObjectStore.java:291)

- at org.apache.hadoop.hive.metastore.ObjectStore.initialize(ObjectStore.java:224)

- at org.apache.hadoop.hive.metastore.ObjectStore.setConf(ObjectStore.java:199)

- at org.apache.hadoop.util.ReflectionUtils.setConf(ReflectionUtils.java:62)

- at org.apache.hadoop.util.ReflectionUtils.newInstance(ReflectionUtils.java:117)

- at org.apache.hadoop.hive.metastore.RetryingRawStore.<init>(RetryingRawStore.java:62)

- at org.apache.hadoop.hive.metastore.RetryingRawStore.getProxy(RetryingRawStore.java:71)

- at org.apache.hadoop.hive.metastore.HiveMetaStore$HMSHandler.newRawStore(HiveMetaStore.java:413)

- at org.apache.hadoop.hive.metastore.HiveMetaStore$HMSHandler.getMS(HiveMetaStore.java:401)

- at org.apache.hadoop.hive.metastore.HiveMetaStore$HMSHandler.createDefaultDB(HiveMetaStore.java:439)

- at org.apache.hadoop.hive.metastore.HiveMetaStore$HMSHandler.init(HiveMetaStore.java:325)

- at org.apache.hadoop.hive.metastore.HiveMetaStore$HMSHandler.<init>(HiveMetaStore.java:285)

- at org.apache.hadoop.hive.metastore.RetryingHMSHandler.<init>(RetryingHMSHandler.java:53)

- at org.apache.hadoop.hive.metastore.RetryingHMSHandler.getProxy(RetryingHMSHandler.java:58)

- at org.apache.hadoop.hive.metastore.HiveMetaStore.newHMSHandler(HiveMetaStore.java:4102)

- at org.apache.hadoop.hive.metastore.HiveMetaStoreClient.<init>(HiveMetaStoreClient.java:121)

- ... 28 more

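Cause:

The log shows that Hive connects to the database as

'hive'@'192-168-103-43' (the machine's hostname, not its IP address), and MySQL rejects that account.

Solution:

So I added a privilege for that account in MySQL:

- GRANT ALL PRIVILEGES ON *.* TO 'hive'@'192-168-103-43' IDENTIFIED BY 'hive';

- flush privileges;

Log in to Hive again: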

- hive> show tables;

OK
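The step from the error message to the missing grant can be sketched in code. The following is only an illustration: the class and method names are hypothetical, and it reuses the GRANT ... IDENTIFIED BY form shown above, which MySQL 8.0 and later no longer accept.

```java
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical helper: extracts the rejected account from a MySQL
// "Access denied" line and builds the matching GRANT statement.
final class GrantFromAccessDenied {
    // Captures the user and host parts of 'user'@'host' in the message.
    private static final Pattern ACCOUNT =
        Pattern.compile("Access denied for user '([^']+)'@'([^']+)'");

    /** Returns a GRANT statement for the account in the message, or null if none is found. */
    static String grantFor(String errorLine, String password) {
        Matcher m = ACCOUNT.matcher(errorLine);
        if (!m.find()) {
            return null;
        }
        return "GRANT ALL PRIVILEGES ON *.* TO '" + m.group(1) + "'@'" + m.group(2)
            + "' IDENTIFIED BY '" + password + "';";
    }

    public static void main(String[] args) {
        String line = "java.sql.SQLException: Access denied for user "
            + "'hive'@'192-168-103-43' (using password: YES)";
        System.out.println(grantFor(line, "hive"));
    }
}
```

The point is simply that the host part of the rejected account should be read from the log rather than guessed.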

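2、Hive exception FAILED: Error In Metadata: Java.Lang.RuntimeException: Unable To Instan

Problem:

I was running Hive jobs on a company virtual machine. Because the data volume was large and the jobs were frequent, the server load became too high and MySQL could no longer be reached; eventually the VM reported "The remote system refused the connection." After restarting the VM I entered Hive:

- hive> show tables;

The following problem appeared: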

- FAILED: Error in metadata: java.lang.RuntimeException: Unable to instantiate org.apache.hadoop.hive.metastore.HiveMetaStoreClient

- FAILED: Execution Error, return code 1 from org.apache.hadoop.hive.ql.exec.DDLTask

Cause:

Restart Hive with the following command:

- ./hive -hiveconf hive.root.logger=DEBUG,console

- hive> show tables;

The debug output reveals the underlying cause:

- Caused by: java.lang.reflect.InvocationTargetException

- at sun.reflect.NativeConstructorAccessorImpl.newInstance0(Native Method)

- at sun.reflect.NativeConstructorAccessorImpl.newInstance(NativeConstructorAccessorImpl.java:39)

- at sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:27)

- at java.lang.reflect.Constructor.newInstance(Constructor.java:513)

- at org.apache.hadoop.hive.metastore.MetaStoreUtils.newInstance(MetaStoreUtils.java:1076)

- … 23 more

- Caused by: javax.jdo.JDODataStoreException: Exception thrown obtaining schema column information from datastore

- NestedThrowables:

- com.mysql.jdbc.exceptions.jdbc4.MySQLSyntaxErrorException: Table 'hive.DELETEME1370713761025' doesn't exist

Following that hint, log in to MySQL (or use a MySQL client) and look at the tables in the hive database:

- mysql -u root -p

- mysql> use hive;

- mysql> show tables;

- +---------------------------+

- | Tables_in_hive |

- +---------------------------+

- | BUCKETING_COLS |

- | CDS |

- | COLUMNS_V2 |

- | DATABASE_PARAMS |

- | DBS |

- | DELETEME1370677637267 |

- | DELETEME1370712928271 |

- | DELETEME1370713342355 |

- | DELETEME1370713589772 |

- | DELETEME1370713761025 |

- | DELETEME1370713792915 |

- | IDXS |

- | INDEX_PARAMS |

- | PARTITIONS |

- | PARTITION_KEYS |

- | PARTITION_KEY_VALS |

- | PARTITION_PARAMS |

- | PART_COL_PRIVS |

- | PART_COL_STATS |

- | PART_PRIVS |

- | SDS |

- | SD_PARAMS |

- | SEQUENCE_TABLE |

- | SERDES |

- | SERDE_PARAMS |

- | SKEWED_COL_NAMES |

- | SKEWED_COL_VALUE_LOC_MAP |

- | SKEWED_STRING_LIST |

- | SKEWED_STRING_LIST_VALUES |

- | SKEWED_VALUES |

- | SORT_COLS |

- | TABLE_PARAMS |

- | TAB_COL_STATS |

- | TBLS |

- | TBL_COL_PRIVS |

- | TBL_PRIVS |

- +---------------------------+

- 36 rows in set (0.00 sec)

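The listing shows tables such as "DELETEME1370713792915", which makes the problem clear: under the computing load the server stopped responding, MySQL stopped responding as well and its process was terminated abnormally, leaving the MySQL table data, and with it Hive's metastore tables, in an inconsistent state.

There are two ways to fix it:

1. Drop the table named in the exception directly in MySQL:

mysql> drop table DELETEME1370713761025;

2. The conservative approach: recreate the missing table using the structure of the DELETEME* tables:

CREATE TABLE `DELETEME1370713792915` ( `UNUSED` int(11) NOT NULL ) ENGINE=InnoDB DEFAULT CHARSET=latin1;

In practice the first method solves the problem; if it does not, try the second.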
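When several leftover tables are present, the first method can be applied to all of them at once. The sketch below is only an illustration with a hypothetical class name: it assumes the table names have already been read from show tables, and it prints the DROP statements instead of executing them so they can be reviewed first.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Hypothetical helper: picks out leftover DELETEME<digits> tables
// and builds one DROP statement per table.
final class DeletemeCleanup {
    /** Returns DROP statements for every name of the form DELETEME followed by digits. */
    static List<String> dropStatements(List<String> tables) {
        List<String> out = new ArrayList<>();
        for (String t : tables) {
            if (t.matches("DELETEME\\d+")) {
                out.add("DROP TABLE IF EXISTS `" + t + "`;");
            }
        }
        return out;
    }

    public static void main(String[] args) {
        // A few names taken from the listing above.
        List<String> tables = Arrays.asList(
            "DBS", "DELETEME1370713761025", "DELETEME1370713792915", "TBLS");
        for (String sql : dropStatements(tables)) {
            System.out.println(sql);
        }
    }
}
```

Real metastore tables such as DBS and TBLS never match the pattern, so they are left untouched.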

3、Hive error, show tables cannot be used: Unable to instantiate org.apache.hadoop.hive.metastore.

Problem:

Hive fails and show tables cannot be used:

Unable to instantiate org.apache.hadoop.hive.metastore.HiveMetaStoreClient

Exception:

- hive> show tables;

- FAILED: Error in metadata: java.lang.RuntimeException: Unable to instantiate org.apache.hadoop.hive.metastore.HiveMetaStoreClient

- FAILED: Execution Error, return code 1 from org.apache.hadoop.hive.ql.exec.DDLTask

Cause:

Hive was left open in another shell and never closed.

Solution:

Find the leftover process with ps -ef | grep hive

and kill it by its process ID: kill -9 <PID>

4、FAILED: Error in metadata: java.lang.RuntimeException: Unable to in

Problem: an error is reported while installing and configuring Hive:

- FAILED: Error in metadata: java.lang.RuntimeException: Unable to instantiate org.apache.hadoop.hive.metastore.HiveMetaStoreClient

- FAILED: Execution Error, return code 1 from org.apache.hadoop.hive.ql.exec.DDLTask

Running in debug mode reports the following:

- [root@hadoop1 bin]# hive -hiveconf hive.root.logger=DEBUG,console

- 13/10/09 16:16:27 DEBUG common.LogUtils: Using hive-site.xml found on CLASSPATH at /opt/hive-0.11.0/conf/hive-site.xml

- 13/10/09 16:16:27 DEBUG conf.Configuration: java.io.IOException: config()

- at org.apache.hadoop.conf.Configuration.<init>(Configuration.java:227)

- at org.apache.hadoop.conf.Configuration.<init>(Configuration.java:214)

- at org.apache.hadoop.hive.conf.HiveConf.<init>(HiveConf.java:1039)

- at org.apache.hadoop.hive.cli.CliDriver.run(CliDriver.java:636)

- at org.apache.hadoop.hive.cli.CliDriver.main(CliDriver.java:614)

- at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

- at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:39)

- at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:25)

- at java.lang.reflect.Method.invoke(Method.java:597)

- at org.apache.hadoop.util.RunJar.main(RunJar.java:156)

- 13/10/09 16:16:27 DEBUG conf.Configuration: java.io.IOException: config()

- at org.apache.hadoop.conf.Configuration.<init>(Configuration.java:227)

- at org.apache.hadoop.conf.Configuration.<init>(Configuration.java:214)

- at org.apache.hadoop.mapred.JobConf.<init>(JobConf.java:330)

- at org.apache.hadoop.hive.conf.HiveConf.initialize(HiveConf.java:1073)

- at org.apache.hadoop.hive.conf.HiveConf.<init>(HiveConf.java:1040)

- at org.apache.hadoop.hive.cli.CliDriver.run(CliDriver.java:636)

- at org.apache.hadoop.hive.cli.CliDriver.main(CliDriver.java:614)

- at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

- at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:39)

- at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:25)

- at java.lang.reflect.Method.invoke(Method.java:597)

- at org.apache.hadoop.util.RunJar.main(RunJar.java:156)

- Logging initialized using configuration in file:/opt/hive-0.11.0/conf/hive-log4j.properties

- 13/10/09 16:16:27 INFO SessionState:

- Logging initialized using configuration in file:/opt/hive-0.11.0/conf/hive-log4j.properties

- 13/10/09 16:16:27 DEBUG parse.VariableSubstitution: Substitution is on: hive

- Hive history file=/tmp/root/hive_job_log_root_4666@hadoop1_201310091616_1069706211.txt

- 13/10/09 16:16:27 INFO exec.HiveHistory: Hive history file=/tmp/root/hive_job_log_root_4666@hadoop1_201310091616_1069706211.txt

- 13/10/09 16:16:27 DEBUG conf.Configuration: java.io.IOException: config()

- at org.apache.hadoop.conf.Configuration.<init>(Configuration.java:227)

- at org.apache.hadoop.conf.Configuration.<init>(Configuration.java:214)

- at org.apache.hadoop.security.UserGroupInformation.ensureInitialized(UserGroupInformation.java:187)

- at org.apache.hadoop.security.UserGroupInformation.isSecurityEnabled(UserGroupInformation.java:239)

- at org.apache.hadoop.security.UserGroupInformation.getLoginUser(UserGroupInformation.java:438)

- at org.apache.hadoop.security.UserGroupInformation.getCurrentUser(UserGroupInformation.java:424)

- at org.apache.hadoop.hive.shims.HadoopShimsSecure.getUGIForConf(HadoopShimsSecure.java:491)

- at org.apache.hadoop.hive.ql.security.HadoopDefaultAuthenticator.setConf(HadoopDefaultAuthenticator.java:51)

- at org.apache.hadoop.util.ReflectionUtils.setConf(ReflectionUtils.java:62)

- at org.apache.hadoop.util.ReflectionUtils.newInstance(ReflectionUtils.java:117)

- at org.apache.hadoop.hive.ql.metadata.HiveUtils.getAuthenticator(HiveUtils.java:365)

- at org.apache.hadoop.hive.ql.session.SessionState.start(SessionState.java:270)

- at org.apache.hadoop.hive.cli.CliDriver.run(CliDriver.java:670)

- at org.apache.hadoop.hive.cli.CliDriver.main(CliDriver.java:614)

- at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

- at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:39)

- at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:25)

- at java.lang.reflect.Method.invoke(Method.java:597)

- at org.apache.hadoop.util.RunJar.main(RunJar.java:156)

- 13/10/09 16:16:27 DEBUG security.Groups: Creating new Groups object

- 13/10/09 16:16:27 DEBUG security.Groups: Group mapping impl=org.apache.hadoop.security.ShellBasedUnixGroupsMapping; cacheTimeout=300000

- 13/10/09 16:16:27 DEBUG security.UserGroupInformation: hadoop login

- 13/10/09 16:16:27 DEBUG security.UserGroupInformation: hadoop login commit

- 13/10/09 16:16:27 DEBUG security.UserGroupInformation: using local user:UnixPrincipal: root

- 13/10/09 16:16:27 DEBUG security.UserGroupInformation: UGI loginUser:root

- 13/10/09 16:16:27 DEBUG security.Groups: Returning fetched groups for 'root'

- 13/10/09 16:16:27 DEBUG security.Groups: Returning cached groups for 'root'

- 13/10/09 16:16:27 DEBUG conf.Configuration: java.io.IOException: config(config)

- at org.apache.hadoop.conf.Configuration.<init>(Configuration.java:260)

- at org.apache.hadoop.hive.conf.HiveConf.<init>(HiveConf.java:1044)

- at org.apache.hadoop.hive.ql.security.authorization.DefaultHiveAuthorizationProvider.init(DefaultHiveAuthorizationProvider.java:30)

- at org.apache.hadoop.hive.ql.security.authorization.HiveAuthorizationProviderBase.setConf(HiveAuthorizationProviderBase.java:108)

- at org.apache.hadoop.util.ReflectionUtils.setConf(ReflectionUtils.java:62)

- at org.apache.hadoop.util.ReflectionUtils.newInstance(ReflectionUtils.java:117)

- at org.apache.hadoop.hive.ql.metadata.HiveUtils.getAuthorizeProviderManager(HiveUtils.java:339)

- at org.apache.hadoop.hive.ql.session.SessionState.start(SessionState.java:272)

- at org.apache.hadoop.hive.cli.CliDriver.run(CliDriver.java:670)

- at org.apache.hadoop.hive.cli.CliDriver.main(CliDriver.java:614)

- at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

- at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:39)

- at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:25)

- at java.lang.reflect.Method.invoke(Method.java:597)

- at org.apache.hadoop.util.RunJar.main(RunJar.java:156)

- 13/10/09 16:16:27 DEBUG conf.Configuration: java.io.IOException: config()

- at org.apache.hadoop.conf.Configuration.<init>(Configuration.java:227)

- at org.apache.hadoop.conf.Configuration.<init>(Configuration.java:214)

- at org.apache.hadoop.mapred.JobConf.<init>(JobConf.java:330)

- at org.apache.hadoop.hive.conf.HiveConf.initialize(HiveConf.java:1073)

- at org.apache.hadoop.hive.conf.HiveConf.<init>(HiveConf.java:1045)

- at org.apache.hadoop.hive.ql.security.authorization.DefaultHiveAuthorizationProvider.init(DefaultHiveAuthorizationProvider.java:30)

- at org.apache.hadoop.hive.ql.security.authorization.HiveAuthorizationProviderBase.setConf(HiveAuthorizationProviderBase.java:108)

- at org.apache.hadoop.util.ReflectionUtils.setConf(ReflectionUtils.java:62)

- at org.apache.hadoop.util.ReflectionUtils.newInstance(ReflectionUtils.java:117)

- at org.apache.hadoop.hive.ql.metadata.HiveUtils.getAuthorizeProviderManager(HiveUtils.java:339)

- at org.apache.hadoop.hive.ql.session.SessionState.start(SessionState.java:272)

- at org.apache.hadoop.hive.cli.CliDriver.run(CliDriver.java:670)

- at org.apache.hadoop.hive.cli.CliDriver.main(CliDriver.java:614)

- at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

- at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:39)

- at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:25)

- at java.lang.reflect.Method.invoke(Method.java:597)

- at org.apache.hadoop.util.RunJar.main(RunJar.java:156)

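Cause:

This error is most likely caused by the MySQL integration, that is, by the metastore connection settings for MySQL.

Solution:

Edit hive-site.xml along these lines: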

- <property>

- <name>javax.jdo.option.ConnectionURL</name>

- <value>jdbc:mysql://192.168.1.101:3306/hive?createDatabaseIfNotExist=true</value>

- <description>JDBC connect string for a JDBC metastore</description>

- </property>

5、A strange exception when using a relative path with Hive's --auxpath

Problem:

When using Hive's --auxpath with a relative path (for example, --auxpath=abc.jar), the following exception is thrown:

- java.lang.IllegalArgumentException: Can not create a Path from an empty string

- at org.apache.hadoop.fs.Path.checkPathArg(Path.java:91)

- at org.apache.hadoop.fs.Path.<init>(Path.java:99)

- at org.apache.hadoop.fs.Path.<init>(Path.java:58)

- at org.apache.hadoop.mapred.JobClient.copyRemoteFiles(JobClient.java:619)

- at org.apache.hadoop.mapred.JobClient.copyAndConfigureFiles(JobClient.java:724)

- at org.apache.hadoop.mapred.JobClient.copyAndConfigureFiles(JobClient.java:648)

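Cause:

The exception message says that a Path object was created from an empty string.

Analysis shows that when Hive is started with "--auxpath=abc.jar", it automatically prepends "file://" to abc.jar, so the path Hive ends up using is "file://abc.jar".

When a Path is built from "file://abc.jar", calling getName on it returns "" (the empty string). When Hive submits the MapReduce job, it uses getName to obtain the file name and creates a new Path from it. The sample code below reproduces this and throws the exception quoted above.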

- Path path = new Path("file://abc.jar");

- System.out.println("path name:" + path.getName());

- System.out.println("authority:" + path.toUri().getAuthority());

- Path newPath = new Path(path.getName());

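The code above prints

path name:

authority:abc.jar

and throws the exception "Can not create a Path from an empty string".

So why does getName on the Path built from "file://abc.jar" return "" rather than "abc.jar", with "abc.jar" ending up as the authority? The relevant code in Path is: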

- if (pathString.startsWith("//", start) && (pathString.length()-start > 2)) { // has authority

- int nextSlash = pathString.indexOf('/', start+2);

- int authEnd = nextSlash > 0 ? nextSlash : pathString.length();

- authority = pathString.substring(start+2, authEnd);

- start = authEnd;

- }

- // uri path is the rest of the string -- query & fragment not supported

- String path = pathString.substring(start, pathString.length());

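pathString is the "file://abc.jar" we passed in. Because it contains only two "/" characters, everything after the second "/" up to the end of the string ("abc.jar") is treated as the authority, and path (the internal member) is set to "". getName is derived from path, so it is "" as well.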
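The same split can be observed without Hadoop on the classpath, because the JDK's java.net.URI applies the same scheme://authority/path rule. This is a minimal sketch, not Hadoop's own code; the class name is hypothetical, and /tmp/abc.jar is an assumed absolute location used only for contrast.

```java
import java.net.URI;

// Illustration only: shows which part of the string the URI parser
// treats as the authority and which part as the path.
final class AuxPathUriDemo {
    /** Formats the authority and path components of the given URI string. */
    static String describe(String s) {
        URI u = URI.create(s);
        return "authority=" + u.getAuthority() + " path=" + u.getPath();
    }

    public static void main(String[] args) {
        // Two slashes: "abc.jar" is parsed as the authority, the path is empty.
        System.out.println(describe("file://abc.jar"));
        // Three slashes: no authority, and the path carries the file name.
        System.out.println(describe("file:///tmp/abc.jar"));
    }
}
```

With only two slashes there is nothing left for the path component, which is exactly the empty string that later fails in new Path(...).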

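Solution:

When setting jars through Hive's --auxpath, use an absolute path, or a form such as "file:///.abc.jar"; that is what Hadoop's Path supports. In fact, many Path-related settings in Hadoop share this problem, so when in doubt, avoid relative paths.

6、HWI WAR file not found error when starting the Hive HWI service

Problem: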

- hive --service hwi

- [niy@niy-computer /]$ $HIVE_HOME/bin/hive --service hwi

- 13/04/26 00:21:17 INFO hwi.HWIServer: HWI is starting up

- 13/04/26 00:21:18 FATAL hwi.HWIServer: HWI WAR file not found at /usr/local/hive/usr/local/hive/lib/hive-hwi-0.12.0-SNAPSHOT.war

Cause:

/usr/local/hive/usr/local/hive/lib/hive-hwi-0.12.0-SNAPSHOT.war is clearly not the right path; the real path is /usr/local/hive/lib/hive-hwi-0.12.0-SNAPSHOT.war, so this must be a configuration problem.

Solution: copy the HWI settings from hive-default.xml into hive-site.xml:

- <property>

- <name>hive.hwi.war.file</name>

- <value>lib/hive-hwi-0.12.0-SNAPSHOT.war</value>

- <description>This sets the path to the HWI war file, relative to ${HIVE_HOME}. </description>

- </property>

- <property>

- <name>hive.hwi.listen.host</name>

- <value>0.0.0.0</value>

- <description>This is the host address the Hive Web Interface will listen on</description>

- </property>

- <property>

- <name>hive.hwi.listen.port</name>

- <value>9999</value>

- <description>This is the port the Hive Web Interface will listen on</description>

- </property>
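The doubled path in the error is what one would expect if the configured value were joined onto ${HIVE_HOME} even though it was already absolute. The sketch below only illustrates that effect with java.io.File on a Unix-like system; it is not the HWI server's code, the class name is hypothetical, and the absolute value is an assumption about what the faulty configuration contained.

```java
import java.io.File;

// Illustration only: joining a base directory with a configured value,
// as the property description ("relative to ${HIVE_HOME}") implies.
final class HwiWarPathDemo {
    /** Joins hiveHome and the configured WAR value, using '/' as separator in the result. */
    static String resolve(String hiveHome, String warValue) {
        return new File(hiveHome, warValue).getPath().replace(File.separatorChar, '/');
    }

    public static void main(String[] args) {
        String home = "/usr/local/hive";
        // Relative value, as in the property above: resolves inside HIVE_HOME.
        System.out.println(resolve(home, "lib/hive-hwi-0.12.0-SNAPSHOT.war"));
        // Assumed faulty value: an absolute path gets the base prepended again.
        System.out.println(resolve(home, "/usr/local/hive/lib/hive-hwi-0.12.0-SNAPSHOT.war"));
    }
}
```

Keeping hive.hwi.war.file relative, as in the property above, avoids the duplication.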

7、Problem: Hive reports the following error on startup:

- Caused by: javax.jdo.JDOException: Couldnt obtain a new sequence (unique id) : Cannot execute statement: impossible to write to binary log since BINLOG_FORMAT = STATEMENT and at least one table uses a storage engine limited to row-based logging.

- InnoDB is limited to row-logging when transaction isolation level is READ COMMITTED or READ UNCOMMITTED.

- NestedThrowables: java.sql.SQLException: Cannot execute statement: impossible to write to binary log since BINLOG_FORMAT = STATEMENT and at least one table uses a storage engine limited to row-based logging.

- InnoDB is limited to row-logging when transaction isolation level is READ COMMITTED or READ UNCOMMITTED.

Cause:

This problem is caused by a misconfiguration of the MySQL instance that stores Hive's metadata.

Solution:

- mysql> SET GLOBAL binlog_format='MIXED';

8、Problem:

Creating a table in Hive fails with:

- create table years (year string, event string) row format delimited fields terminated by '\t';

- FAILED: Execution Error, return code 1 from org.apache.hadoop.hive.ql.exec.DDLTask.

- MetaException(message:For direct MetaStore DB connections, we don't support retries at the client level.)

Cause:

This is a character set problem; the MySQL character set needs to be configured:

Solution:

- mysql> alter database hive character set latin1;

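9、Problem:

Running the Hive client prints the following warning:

WARN conf.HiveConf: HiveConf of name hive.metastore.local does not exist

Cause: in Hive 0.10, 0.11, and later versions the hive.metastore.local property is no longer used.

Solution:

Remove that parameter from hive-site.xml.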

10、Problem:

Starting Hive reports the following error:

(Permission denied: user=anonymous, access=EXECUTE, inode="/tmp":hadoop:supergroup:drwx------

Cause: Hive does not have permission on the HDFS /tmp directory.

Solution:

Grant the permission: hadoop dfs -chmod -R 777 /tmp

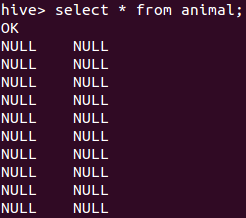

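11、Hive queries return NULL and no data is displayed.

The problem is as follows:

Solution:

- LOAD DATA LOCAL INPATH '/tmp/sanple.txt' OVERWRITE INTO TABLE animal;

Explanation:

This is a field delimiter problem. The delimiter has to be declared when the table is defined (Hive's LOAD DATA statement itself takes no delimiter clause), in the ROW FORMAT DELIMITED part of the table definition:

- FIELDS TERMINATED BY '\t'

This clause states that the field delimiter is a tab.

Choose the delimiter according to what your own data file actually uses.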
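Why a wrong delimiter produces NULL columns can be seen with a plain string split. When a table declares no delimiter, Hive's default field separator is the Ctrl-A character (\u0001), so a tab-separated line is read as a single field and the remaining columns have nothing to take their values from. The sketch below is only an illustration: the class name and the sample line "1\tcat" are made up, and the split merely mimics the effect rather than Hive's actual SerDe.

```java
import java.util.regex.Pattern;

// Illustration only: counts how many fields a line yields
// when split on a given delimiter.
final class DelimiterDemo {
    /** Splits the line on the literal delimiter and returns the number of fields. */
    static int fieldCount(String line, String delimiter) {
        return line.split(Pattern.quote(delimiter), -1).length;
    }

    public static void main(String[] args) {
        String line = "1\tcat"; // hypothetical tab-separated row
        // Default delimiter (Ctrl-A): the whole line is one field.
        System.out.println(fieldCount(line, "\u0001"));
        // Declared delimiter (tab): the line splits into its two fields.
        System.out.println(fieldCount(line, "\t"));
    }
}
```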

12、ERROR beeline.ClassNameCompleter: Fail to parse the class name from the Jar file due to

When connecting to the Hive service with beeline, the following error was reported:

- beeline> !connect jdbc:hive2//h2slave1:10000

- scan complete in 1ms

- 16/07/27 11:40:54 [main]: ERROR beeline.ClassNameCompleter: Fail to parse the class name from the Jar file due to the exception:java.io.FileNotFoundException: minlog-1.2.jar (No such file or directory)

- 16/07/27 11:40:54 [main]: ERROR beeline.ClassNameCompleter: Fail to parse the class name from the Jar file due to the exception:java.io.FileNotFoundException: objenesis-1.2.jar (No such file or directory)

- 16/07/27 11:40:54 [main]: ERROR beeline.ClassNameCompleter: Fail to parse the class name from the Jar file due to the exception:java.io.FileNotFoundException: reflectasm-1.07-shaded.jar (No such file or directory)

- scan complete in 596ms

- No known driver to handle "jdbc:hive2//h2slave1:10000"

Solution:

This problem is actually caused by a mistyped JDBC URL: the ":" after hive2 is missing.

Change it to the following form:

- beeline> !connect jdbc:hive2://h2slave1:10000
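A JDBC driver is selected by the prefix of the URL, which is why a single missing ":" ends in "No known driver". The following sketch is a hypothetical pre-check, not part of beeline or the Hive driver, that catches this typo before connecting.

```java
// Hypothetical pre-check: verifies that a URL starts with the
// HiveServer2 JDBC prefix used in the corrected command above.
final class Hive2UrlCheck {
    static final String PREFIX = "jdbc:hive2://";

    /** Returns true if the URL begins with the jdbc:hive2:// prefix. */
    static boolean looksLikeHive2Url(String url) {
        return url != null && url.startsWith(PREFIX);
    }

    public static void main(String[] args) {
        // The mistyped URL from the error above, then the corrected one.
        System.out.println(looksLikeHive2Url("jdbc:hive2//h2slave1:10000"));
        System.out.println(looksLikeHive2Url("jdbc:hive2://h2slave1:10000"));
    }
}
```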

13、Missing Hive Execution Jar: /.../hive-exec-*.jar

Running hive prints Missing Hive Execution Jar: /usr/hive/hive-0.11.0/bin/lib/hive-exec-*.jar

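Look closely at the /bin/lib part of this path. In the Hive installation directory, bin and lib are sibling directories, so a path that nests lib under bin means the Hive path in the CentOS system configuration is wrong. Opening /etc/profile shows Hive configured as:

- export PATH=$JAVA_HOME/bin:$PATH:/usr/hive/hive-0.11.0/bin

This is clearly a path configuration problem: with this setting the system looks for the jars it needs under the bin directory of the Hive installation, but bin and lib are siblings. The Hive path in the system configuration should therefore be the installation directory itself:

- export PATH=$JAVA_HOME/bin:$PATH:/usr/hive/hive-0.11.0

Note: problems like this almost always come from environment variable settings. Carefully check /etc/profile against your Hive installation path. The launcher script looks for the jar under ${HIVE_HOME}/lib, so also make sure that HIVE_HOME, if it is set, points to the installation directory rather than to its bin subdirectory.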

14、Hive startup error: Found class jline.Terminal, but interface was expected

The error is as follows:

- [ERROR] Terminal initialization failed; falling back to unsupported

- java.lang.IncompatibleClassChangeError: Found class jline.Terminal, but interface was expected

- at jline.TerminalFactory.create(TerminalFactory.java:101)

- at jline.TerminalFactory.get(TerminalFactory.java:158)

- at jline.console.ConsoleReader.<init>(ConsoleReader.java:229)

- at jline.console.ConsoleReader.<init>(ConsoleReader.java:221)

- at jline.console.ConsoleReader.<init>(ConsoleReader.java:209)

- at org.apache.hadoop.hive.cli.CliDriver.getConsoleReader(CliDriver.java:773)

- at org.apache.hadoop.hive.cli.CliDriver.executeDriver(CliDriver.java:715)

- at org.apache.hadoop.hive.cli.CliDriver.run(CliDriver.java:675)

- at org.apache.hadoop.hive.cli.CliDriver.main(CliDriver.java:615)

- at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

- at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:57)

- at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

- at java.lang.reflect.Method.invoke(Method.java:606)

- at org.apache.hadoop.util.RunJar.main(RunJar.java:212)

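Cause:

Conflicting jline versions: an older jline is present in the Hadoop directory:

/hadoop-2.6.0/share/hadoop/yarn/lib:

jline-0.9.94.jar

Solution:

Copy the newer jline from Hive into Hadoop's share/hadoop/yarn/lib,

and delete the old version from that directory.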

- cp /hive/apache-hive-1.1.0-bin/lib/jline-2.12.jar /hadoop-2.5.2/share/hadoop/yarn/lib

15、MySQLSyntaxErrorException: Specified key was too long; max key length is 767 bytes

When using Hive with MySQL as the metadata store, the following error occurred:

- com.mysql.jdbc.exceptions.jdbc4.MySQLSyntaxErrorException: Specified key was too long; max key length is 767 bytes

- at sun.reflect.GeneratedConstructorAccessor31.newInstance(Unknown Source)

- at sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:45)

- at java.lang.reflect.Constructor.newInstance(Constructor.java:526)

- at com.mysql.jdbc.Util.handleNewInstance(Util.java:377)

- at com.mysql.jdbc.Util.getInstance(Util.java:360)

Solution:

When MySQL stores the metadata, change the database character set:

- alter database hive character set latin1;
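The arithmetic behind this fix can be sketched as follows. The 767-byte figure is the limit quoted in the error message; MySQL's utf8 character set may need up to 3 bytes per character, while latin1 needs 1. The 256-character column length is a hypothetical example, not a value taken from the Hive schema, and the class name is likewise made up.

```java
// Illustration only: worst-case byte length of an indexed character column
// compared with the 767-byte limit from the error message.
final class IndexKeyLength {
    static final int MAX_KEY_BYTES = 767; // limit quoted in the error message

    /** Worst-case key size in bytes for a column of the given character length. */
    static int maxKeyBytes(int chars, int bytesPerChar) {
        return chars * bytesPerChar;
    }

    public static void main(String[] args) {
        int chars = 256; // hypothetical indexed column length
        int utf8 = maxKeyBytes(chars, 3);   // utf8: up to 3 bytes per character
        int latin1 = maxKeyBytes(chars, 1); // latin1: 1 byte per character
        System.out.println("utf8:   " + utf8 + " bytes, fits=" + (utf8 <= MAX_KEY_BYTES));
        System.out.println("latin1: " + latin1 + " bytes, fits=" + (latin1 <= MAX_KEY_BYTES));
    }
}
```

Under these assumptions the utf8 key exceeds the limit by a single byte, while the same column in latin1 fits comfortably.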

[This article is an original contribution by 51CTO column author "王森丰"; please credit the source when reposting.]