Advanced RAG Optimization: Enhancing Document Retrieval with Question Generation

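This article introduces a text augmentation technique that uses additional question generation to improve document retrieval in a vector database. Questions related to each text fragment are generated and indexed alongside the fragment itself. This enhances the standard retrieval process and raises the likelihood of finding relevant documents that can serve as context for generative question answering.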

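Implementation Steps

By enriching text fragments with related questions, the goal is to significantly improve the accuracy of identifying the parts of a document most likely to contain the answer to a user query. An implementation generally consists of the following steps: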

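- Document parsing and text chunking: process the PDF document and split it into manageable text fragments.

- Question augmentation: use a language model to generate relevant questions at the document level and the fragment level.

- Vector store creation: compute document embeddings with an embedding model and build a FAISS vector store.

- Retrieval and answer generation: use FAISS to find the most relevant documents, then generate an answer from the provided context.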
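
The chunking step in the list above can be sketched as a sliding character window. This is a minimal illustration, not the article's own code: the function name `split_into_chunks` and the `chunk_size` and `overlap` parameters are assumptions, and PDF parsing is left out (the input is assumed to be text that has already been extracted).

```python
from typing import List


def split_into_chunks(text: str, chunk_size: int = 200, overlap: int = 50) -> List[str]:
    """Split text into character windows of at most chunk_size that overlap by `overlap`."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must satisfy 0 <= overlap < chunk_size")

    step = chunk_size - overlap  # how far the window advances each time
    chunks: List[str] = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        # Stop once the current window has reached the end of the text.
        if start + chunk_size >= len(text):
            break
    return chunks


if __name__ == "__main__":
    for chunk in split_into_chunks("abcdefghij", chunk_size=4, overlap=1):
        print(chunk)
```

The overlap keeps a sentence that straddles a boundary visible in both neighbouring fragments, at the cost of some duplicated text in the index.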

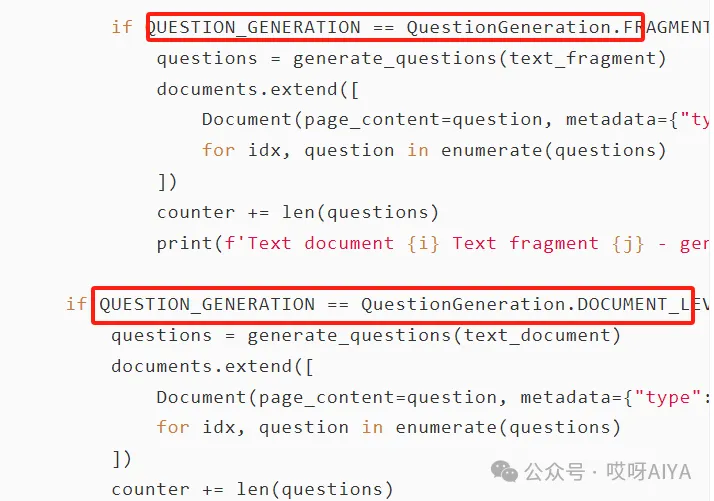

A setting controls whether question augmentation is performed at the document level or at the fragment level.
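
One way such a setting might look is an enum that selects which texts are passed to the question generator. The names `QuestionLevel` and `texts_for_questions` are hypothetical and chosen only for this sketch; the article does not show its own configuration.

```python
from enum import Enum
from typing import List


class QuestionLevel(Enum):
    """Hypothetical switch for where questions are generated."""
    DOCUMENT = "document"  # one set of questions for the whole document
    FRAGMENT = "fragment"  # one set of questions per text fragment


def texts_for_questions(document: str, fragments: List[str], level: QuestionLevel) -> List[str]:
    """Return the texts that questions should be generated from for the chosen level."""
    if level is QuestionLevel.DOCUMENT:
        return [document]
    return list(fragments)
```

Document-level generation needs fewer model calls, while fragment-level generation produces questions that point at a more specific passage.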

Implementation

Question Generation
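
The following is a minimal sketch of question generation, under the assumption that the language model is available as a plain callable that takes a prompt string and returns text. The prompt wording, the function names and the `fake_llm` stand-in are all illustrative and not taken from the original implementation; a real system would pass in a function that calls an LLM API.

```python
import re
from typing import Callable, List

# Illustrative prompt; the wording is an assumption, not the article's prompt.
PROMPT_TEMPLATE = (
    "Using only the context below, write at least {n} questions that this "
    "context can answer, one per line.\n\nContext:\n{context}"
)


def clean_questions(raw: str) -> List[str]:
    """Keep unique lines that end with '?' after stripping list markers."""
    questions: List[str] = []
    for line in raw.splitlines():
        # Remove leading markers such as "1.", "2)", "-", "*" or a bullet.
        text = re.sub(r"^\s*(?:\d+[.)]|[-*•])\s*", "", line).strip()
        if text.endswith("?") and text not in questions:
            questions.append(text)
    return questions


def generate_questions(context: str, llm: Callable[[str], str], n: int = 3) -> List[str]:
    """Ask the model for questions about `context` and return the cleaned list."""
    prompt = PROMPT_TEMPLATE.format(n=n, context=context)
    return clean_questions(llm(prompt))


def fake_llm(prompt: str) -> str:
    """Stand-in for a real model call, used only to keep this sketch runnable."""
    return (
        "1. What is FAISS?\n"
        "2. What is FAISS?\n"
        "- How are fragments embedded?\n"
        "Not a question"
    )


if __name__ == "__main__":
    print(generate_questions("FAISS is a similarity search library.", fake_llm))
```

Filtering and de-duplicating the model output matters because every kept question becomes an extra entry in the vector store.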

Main Processing Flow
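
The overall flow can be sketched end to end with the standard library alone. Two substitutions are made here and should be read as assumptions: a toy bag-of-words vector replaces the embedding model, and a brute-force cosine scan replaces the FAISS vector store. All function names and the sample data are hypothetical. The key idea carried over from the article is that each generated question is indexed with a pointer back to its fragment, so a query that matches a question retrieves the fragment as context.

```python
import math
import re
from collections import Counter
from typing import Callable, List, Tuple

IndexEntry = Tuple[Counter, int]  # (vector, id of the fragment it points to)


def embed(text: str) -> Counter:
    """Toy bag-of-words vector (stand-in for a real embedding model)."""
    return Counter(re.findall(r"\w+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def build_index(fragments: List[str], question_fn: Callable[[str], List[str]]) -> List[IndexEntry]:
    """Index each fragment and each of its generated questions under the fragment's id."""
    index: List[IndexEntry] = []
    for frag_id, fragment in enumerate(fragments):
        for text in [fragment, *question_fn(fragment)]:
            index.append((embed(text), frag_id))
    return index


def retrieve(query: str, index: List[IndexEntry], fragments: List[str], k: int = 1) -> List[str]:
    """Return up to k distinct fragments, ranked by their best-matching index entry."""
    q = embed(query)
    ranked = sorted(index, key=lambda entry: cosine(q, entry[0]), reverse=True)
    seen: List[int] = []
    for _, frag_id in ranked:
        if frag_id not in seen:
            seen.append(frag_id)
        if len(seen) == k:
            break
    return [fragments[i] for i in seen]


def build_answer_prompt(query: str, context: List[str]) -> str:
    """Assemble the prompt that would be sent to the answering model."""
    return "Context:\n" + "\n".join(context) + "\n\nQuestion: " + query


# Sample data; the questions are hard-coded in place of a model call.
FRAGMENTS = [
    "FAISS stores dense vectors and supports similarity search.",
    "Overlapping chunks keep sentences from being cut at fragment borders.",
]
QUESTIONS = {
    FRAGMENTS[0]: ["Which library performs nearest neighbor lookup?"],
    FRAGMENTS[1]: ["Why should chunks overlap?"],
}

if __name__ == "__main__":
    index = build_index(FRAGMENTS, lambda fragment: QUESTIONS[fragment])
    context = retrieve("why overlap", index, FRAGMENTS, k=1)
    print(build_answer_prompt("why overlap", context))
```

In this sample the query "why overlap" shares no word with either fragment, so it is the generated question that brings the second fragment to the top. With real embeddings the effect is less binary, but the mechanism is the same.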

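This technique offers one way to improve the quality of information retrieval in vector-based document retrieval systems. The implementation calls a large language model API, which may incur costs depending on usage.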

Reprinted from the WeChat official account 哎呀AIYA

Original link: https://mp.weixin.qq.com/s/bjI02uOeAGXSelCApb0yOQ


Last modified 2024-9-14 14:18:55
